Preface

This document will provide you with all of the information that you need to install, manage and administer any of the QVD components within a QVD solution. As an open-source product, QVD is constantly growing and being improved. We endeavour to keep our documentation as complete as possible and encourage readers to notify us of ways that the documentation can be improved. If you have any queries or suggestions, please email us at info@theqvd.com.

The document is broken into three main parts. The first part discusses the core components that make up a solution, how they interact and how they are installed and configured. The second part deals with integration issues and how to tweak behaviors within QVD to achieve better performance or to be more scalable. The third part is dedicated to providing you with all of the information that you may need to create and manage the Operating System Disk Images and Virtual Machines that get loaded into each virtual desktop.

Additionally, we provide a Bibliography to reference external material that may help you to gain a better understanding of the different technologies involved in a QVD solution. We also provide a Glossary of commonly used terms, which may help you to understand some of our own terminology and some less frequently encountered terms when you come across them.

1. What is QVD?

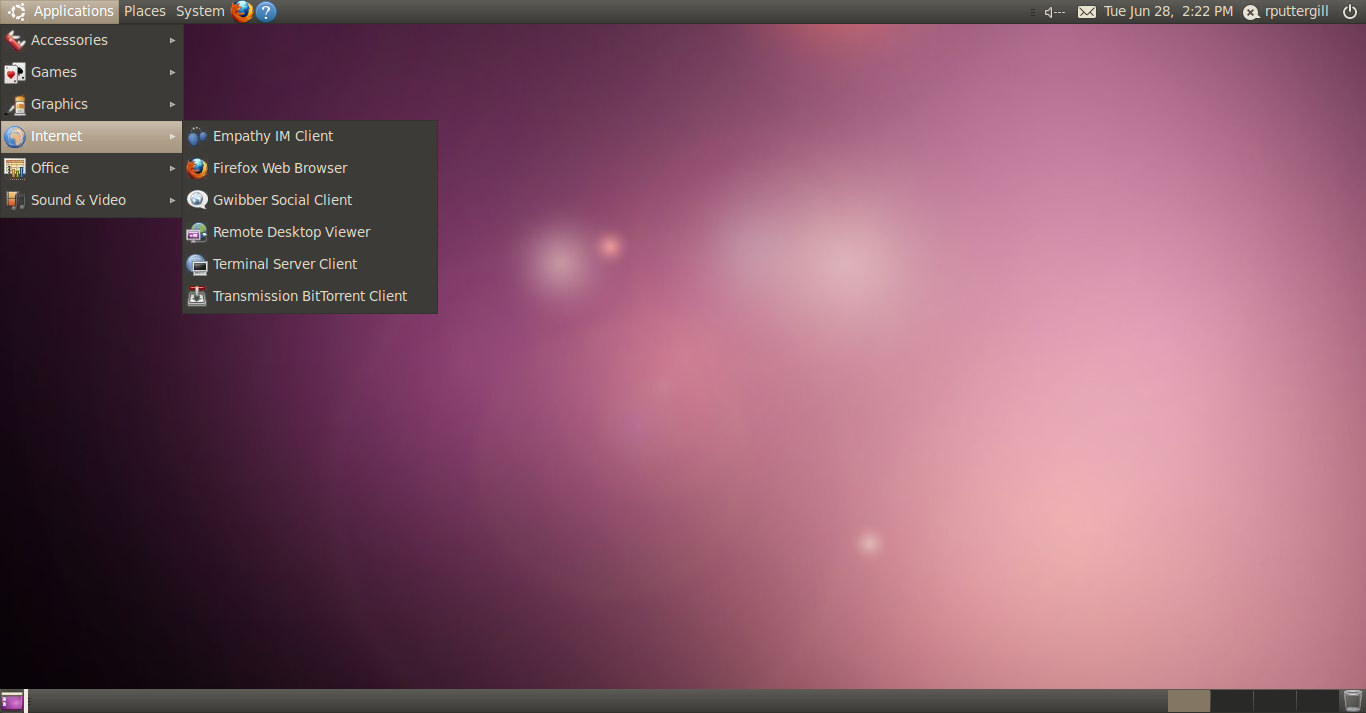

QVD (Quality Virtual Desktop) is a Linux focused VDI (Virtual Desktop Infrastructure). The software is designed to entirely virtualize the Linux desktop, so that client systems are able to connect to a central server to load their desktop environment and applications. This means that when users work from their local machine, all of the programs, applications, processes, and data used are kept on the server and run centrally. Virtualization offers a number of benefits:

- Users can switch between computers on a network and continue to work as if still located at the same desktop, with full access to all of their applications and data
- Administrators have greater control over the applications that are installed on users’ systems, and are able to manage user data more easily to perform backups, virus scans and similar tasks
- It is easier for Administrators to provision new desktops and to deploy applications for new users
- There is reduced downtime in the case of hardware failures
- Users can make use of a variety of different devices to access their desktop and applications, including laptops, PCs and smartphones
- Users can work securely with the same desktop and applications from a remote location without the requirement for a VPN
- Improved overall system and data security
- Reduced costs of hardware, maintenance and administration

The QVD Server virtualizes each Linux Desktop. This can be achieved by using one of two virtualization technologies. Most commonly the Linux Kernel Virtual Machine (KVM) is used as a complete bare-metal hypervisor; however, as of QVD 3.1, it is also possible to take advantage of Linux Containers (LXC) to achieve operating-system level virtualization. This virtualization helps to keep each user’s environment as its own discrete entity, to improve security and stability. Virtualization allows you to serve multiple Operating Systems or environments to your users, depending on their requirements. These are loaded as independent Images on the QVD Server. In general, you will only load one or two images for all of your users. These images provide the base operating system and workstation environment, which are replicated for each virtual machine. When a user connects to the server, making use of the client application, a Virtual Machine is started solely for that user. This provides a jail that prevents any inappropriate system behaviour from affecting other users. When the user disconnects, the Virtual Machine is stopped. This means that if the user’s environment has somehow become problematic, a disconnect can revert the environment to its original state. This provides a much better level of security than if a user were working on an independent workstation.

In order to maintain user data, such as desktop settings, documents and other user specific information, there are two options. The first, and more common approach, is to store this information on an NFS share. In this way, data can be stored on a NAS device or within a SAN, where it can be easily managed. A second option is to load a second image into the virtual machine. This image is persistent, in that it can be updated by the user, and the changes are stored for each time the image is reloaded. Either approach is equally valid. By keeping user data separate from the core image, QVD helps to ensure that in the event that a core image is corrupted or in the event of system failure, you are able to minimize the time needed for disaster recovery.

The desktop is accessed from each workstation, making use of a client that uses the NX protocol to communicate with the server and to deliver the desktop and applications to the client. The NX protocol is used to handle remote X Windows connections and provides superior compression to allow for high performance even when accessing the desktop over a low-bandwidth connection. Furthermore, the QVD is able to encapsulate the NX protocol with SSL to secure connectivity so that users can work in a safe and secure manner, even if accessing their desktops from remote locations. QVD provides client software to run on a variety of base operating systems and devices, from Linux to Windows. This means that wherever you are, regardless of the system that you have access to, you can run the client application to access your Desktop.

1.1. Some Notes About This Manual

In general, it is assumed that most users of the QVD will take advantage of the KVM virtualization offered within the product. As a result, the majority of this guide assumes that you will configure the product in this way. Where users choose LXC virtualization instead, there may be some differences in configuration. Where these differences are significant, we have included information for both virtualization platforms. We have also included a separate chapter on LXC virtualization which provides additional guidance to users who choose to explore this option.

Similarly, although we provide packages for other Linux distributions such as SUSE Linux Enterprise Server (SLES), we assume that the majority of our users will use Ubuntu Linux. As a result, many of the commands in this guide, along with the locations of configuration files, are provided with the assumption that you are using Ubuntu Linux. Where it is important that users of SLES are aware of differences, we have attempted to provide this information as clearly as possible.

Core Components

In this part of the manual, we discuss the core components that make up a QVD Solution. We will explain the architecture of a solution, and we will cover the installation and configuration settings specific to each component in detail.

1. Components and Architecture

1.1. Introduction to QVD Components

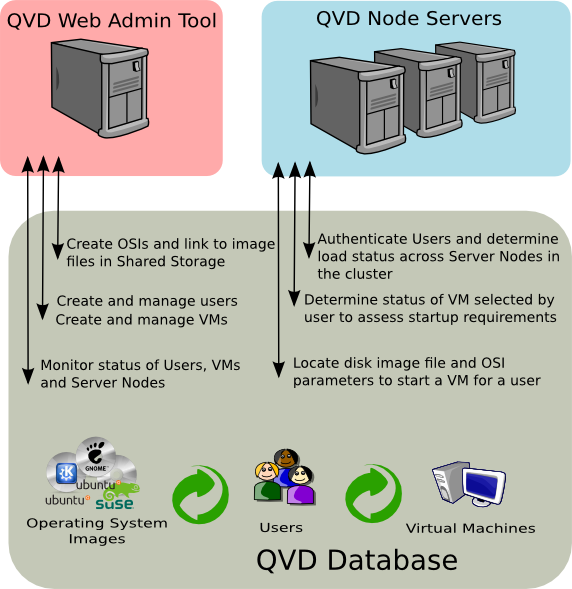

QVD 3.5.0 comprises a number of core components that work together to create a complete QVD solution. While not every component is strictly required in order to create a functioning environment, it is advisable to install all of them to ensure ease of management and to protect the stability of the platform.

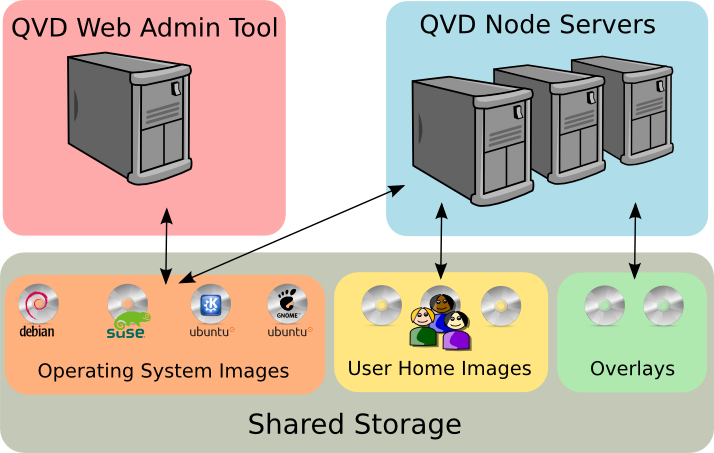

There are three major server side components:

- QVD Server,
- Administration Server, and
- PostgreSQL DBMS.

Ideally, each of these should be stored on a dedicated host for stability reasons, although it will become clear later that these components will have access to some shared resources in order to function properly.

While it is likely that you will only have one Administration Server and one PostgreSQL Database system in your environment, it is possible to have any number of QVD Server Nodes. Therefore, most deployments of the QVD will also include the following components:

- Load Balancer
- Shared Storage Facility (e.g. NFS)

By using a Load Balancer in front of your QVD Server Nodes, client connections can be balanced across healthy Server Nodes in order to access a virtual desktop. This reduces the amount of configuration within the client software and also ensures a much healthier environment to serve virtual desktops.

Since each server node will require access to particular shared resources, such as the disk images that will be loaded into a virtual machine, and user home data, a shared storage facility is generally set up in order to allow all of the server nodes to have access to this shared data.

Within each Disk Image that is loaded into a Virtual Machine to serve a Virtual Desktop to an end user, there is an additional component which becomes active for each Virtual Machine that is started:

- QVD Virtual Machine Agent

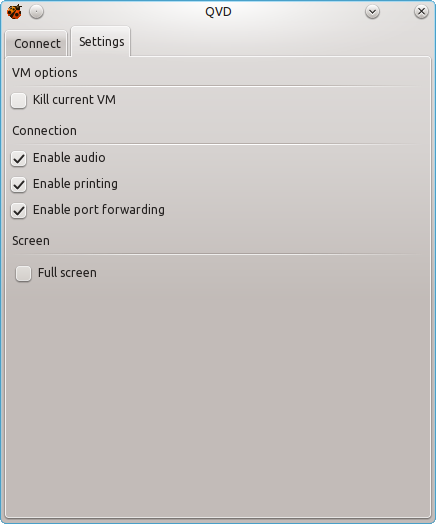

The QVD Virtual Machine Agent (VMA) is responsible for accepting connections from the client via a QVD Server Node. It facilitates access to the desktop environment running within the virtual machine, including the ability to configure printer access and to configure the virtual machine to stream audio to the client.

Finally there is the client side component:

- QVD GUI Client

The client is packaged for a variety of Linux base operating systems, and for Microsoft Windows. A client for Android platforms has also been released.

The client software can be installed on as many host systems as required.

1.2. QVD Architecture

In most production environments, the architecture of a QVD environment is such that several QVD Server Nodes will be running in parallel to each other. The QVD environment is designed to handle a fully load-balanced environment, so that you can have a High Availability solution.

1.2.1. Internal Elements

A QVD Server Node is composed of a single binary, the HKD or House Keeping Daemon. This brokers all connections with a layer-7 router which ensures that all clients are routed to the correct virtual IP address that is configured for the Virtual Machine that has been created for the connecting user. It is also responsible for authenticating the user prior to connection, and for establishing the client session. The HKD starts a listener on each QVD node and forks each incoming connection once it is established.

The HKD tracks the status of virtual machines. It is responsible for starting and stopping virtual machines as well as monitoring the health of each virtual machine and updating status information within the QVD Database, so that other Nodes and the administration tools are able to function accordingly. In general, the HKD is responsible for managing virtual machine status.

1.2.2. HKD Behaviour

The House Keeping Daemon is responsible for managing virtual machine states based on information that it detects within the QVD Database. The HKD regularly polls the QVD database to determine the status of each Virtual Machine. If the status has been changed by another element such as the web administration tool, the HKD is responsible for enacting the appropriate commands to effect the status change.

When QVD is configured for KVM virtualization, the HKD runs a KVM instance for each virtual machine that needs to be started, and provides startup options based on information obtained from the database.

When QVD is configured for LXC virtualization, the HKD will first check to determine whether the image file has been uncompressed into the basefs folder in the shared storage area, and uncompresses the image file if this has not already been done. The HKD then uses the fuse-unionfs module to perform a union mount of the image in the basefs folder with an automatically generated overlay file system and home file system. This mount is performed inside the rootfs folder in the shared storage. Finally, the HKD will load the newly mounted image into an LXC instance.

(It is possible to use alternative methods to create a union style mount, including using the aufs module which can offer significant performance improvements. Users have also succeeded in using bind mounts to achieve the same purpose.)

As the Virtual Machine instance starts, the HKD will check that the image boots correctly, that it has network connectivity and that the QVD-VMA is running within the virtual machine. If any of these checks fails, the HKD will change the state of the virtual machine to blocked within the QVD Database. After a short period, the HKD will kill the running virtual machine.

During each loop run, the HKD checks the health of all running virtual machines, checks the database to determine whether there are any VM state changes, implements any changes to VM state, and updates the information in the database pertaining to VM state.

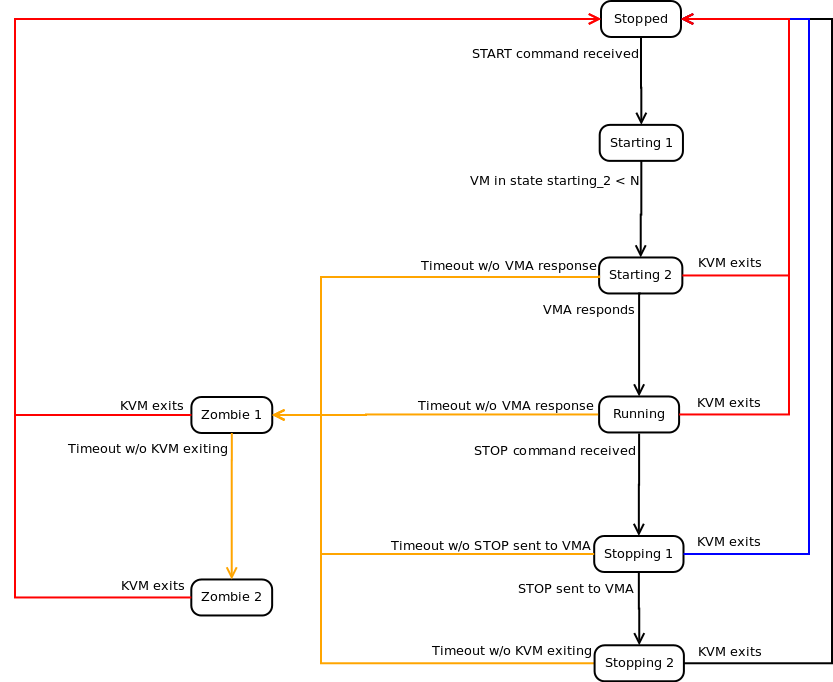

As per the diagram above, these are typical examples of the different Machine States that the HKD will return for a Virtual Machine starting up using KVM.

- Stopped: the VM is not running on any host
- Starting 1: the HKD has received the start command but is waiting until it has the resources available to move to the next machine state
- Starting 2: the VM has been started but the boot process has not yet completed
- Running: the VM is running on a host
- Stopping 1: the HKD received the stop command but is waiting for the VMA within the VM to respond to the request
- Stopping 2: the VMA has responded to the stop request and the VM is in the process of shutting down
- Zombie 1: The VM is running but is not responding, a TERM signal has been sent to the process
- Zombie 2: The VM is running but is not responding, a KILL signal has been sent to the process
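
The states listed above can be modelled as a small state machine. The following Python sketch is illustrative only: the state names come from the list, but the transition table is an assumption inferred from the descriptions (the diagram itself is not reproduced here), and none of these identifiers are part of QVD.

```python
from enum import Enum


class VMState(Enum):
    """Machine states as described in the list above."""
    STOPPED = "stopped"
    STARTING_1 = "starting 1"
    STARTING_2 = "starting 2"
    RUNNING = "running"
    STOPPING_1 = "stopping 1"
    STOPPING_2 = "stopping 2"
    ZOMBIE_1 = "zombie 1"
    ZOMBIE_2 = "zombie 2"


# ASSUMPTION: these transitions are inferred from the state descriptions,
# not taken from the HKD source or from the diagram.
ASSUMED_TRANSITIONS = {
    VMState.STOPPED: {VMState.STARTING_1},
    VMState.STARTING_1: {VMState.STARTING_2},
    VMState.STARTING_2: {VMState.RUNNING, VMState.ZOMBIE_1},
    VMState.RUNNING: {VMState.STOPPING_1},
    VMState.STOPPING_1: {VMState.STOPPING_2, VMState.ZOMBIE_1},
    VMState.STOPPING_2: {VMState.STOPPED, VMState.ZOMBIE_1},
    VMState.ZOMBIE_1: {VMState.STOPPED, VMState.ZOMBIE_2},  # TERM sent
    VMState.ZOMBIE_2: {VMState.STOPPED},                    # KILL sent
}


def can_transition(current, target):
    """Return True if the assumed table allows moving from current to target."""
    return target in ASSUMED_TRANSITIONS[current]
```

A table of this kind makes it easy to reject impossible jumps, such as a VM going from Stopped straight to Running without passing through the two starting states.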

DI Tags

QVD supports the ability to "tag" Disk Images. This feature is important, because it allows you to easily change Disk Image versions for Virtual Machines. If you are making a change to the Disk Image that a large number of users are already making use of, you can assign it a new DI Tag. You are then able to roll the change out to as many virtual desktops as you require by changing the DI Tag that the Virtual Machine is set to load. If something goes wrong, rollback is as simple as updating the DI Tag being used by a Virtual Machine.

When the HKD loads a Virtual Machine, it checks the DI Tag for that Virtual Machine within the QVD-DB, and ensures that the correct Disk Image is used during the startup phase. Therefore, if a DI Tag is changed while a Virtual Machine is running, the change will not be implemented until the Virtual Machine has been restarted.
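
The behaviour described above, where a tag is resolved to a Disk Image only at startup, can be sketched in Python as follows. This is a simplified model under stated assumptions: the class, the function, the tag names and the image file names are all hypothetical and do not reflect the QVD-DB schema.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class VirtualMachine:
    name: str
    di_tag: str                         # tag the VM is configured to load
    loaded_image: Optional[str] = None  # image actually in use while running


def start_vm(vm: VirtualMachine, tag_to_image: Dict[str, str]) -> str:
    """Resolve the VM's DI Tag to a Disk Image at startup only."""
    vm.loaded_image = tag_to_image[vm.di_tag]
    return vm.loaded_image


# Hypothetical tags and image names, for illustration only.
tags = {"stable": "desktop-v1.img", "testing": "desktop-v2.img"}
vm = VirtualMachine(name="alice-desktop", di_tag="stable")

start_vm(vm, tags)     # the VM boots the image tagged "stable"
vm.di_tag = "testing"  # changing the tag does not affect the running VM
image_while_running = vm.loaded_image
start_vm(vm, tags)     # only a restart picks up the new tag
image_after_restart = vm.loaded_image
```

Rolling back follows the same path in reverse: set the tag back to its previous value and restart the Virtual Machine.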

1.2.3. QVD Client and L7R Server Node Interactions

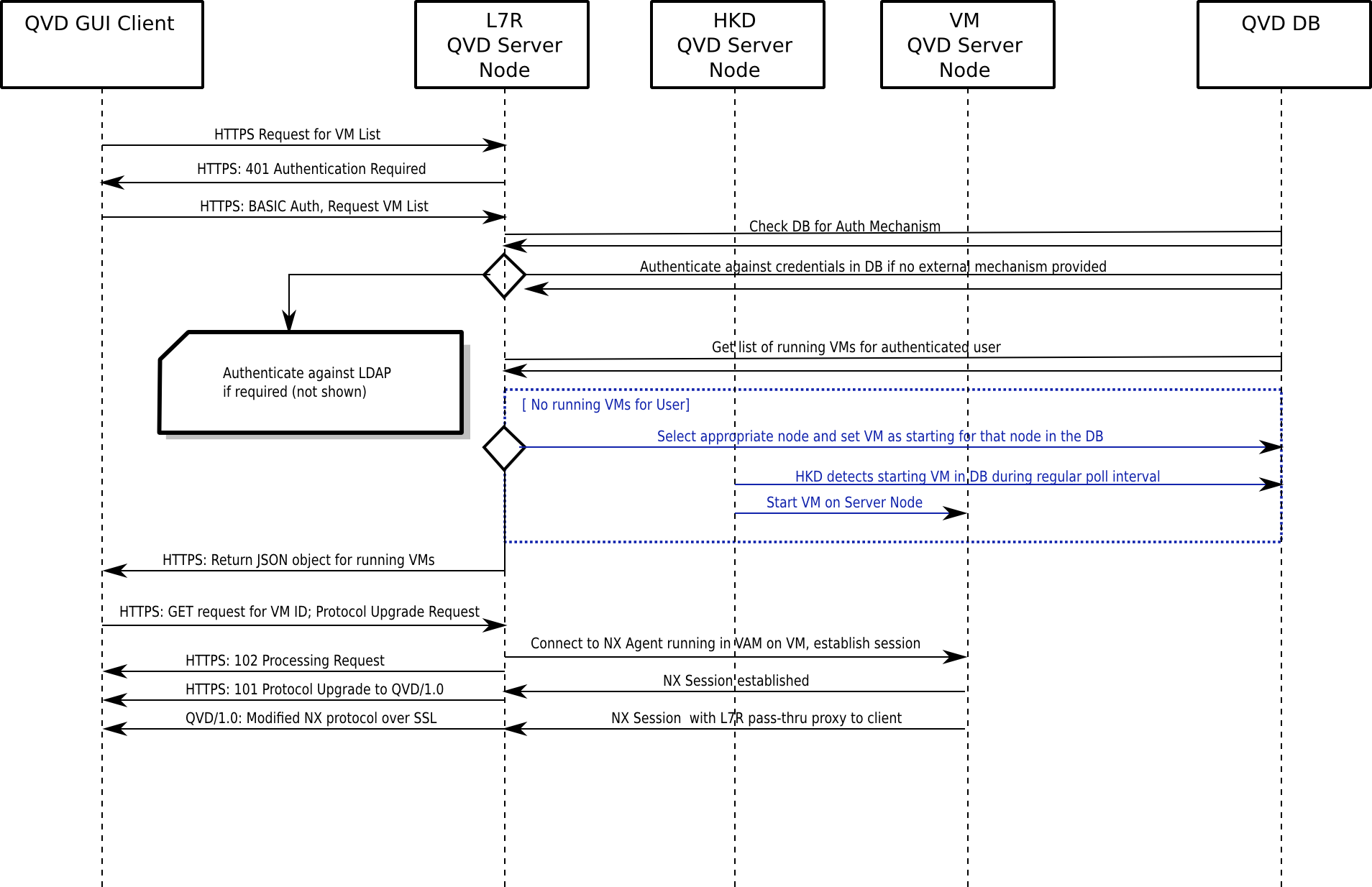

The QVD Client connects directly to the L7R component of the HKD. The Client initiates a connection over HTTPS, where it is prompted to provide HTTP BASIC authentication credentials.

The L7R will then connect to the backend database to determine how authentication should take place (i.e. locally or using an external LDAP directory) and take the appropriate steps to perform the authentication process. The L7R will return an HTTP OK response if the authentication was successful, or will return a 401 Unauthorized if authentication fails.

Once authenticated, the client requests a list of virtual machines that are available to the user. The server responds with a JSON formatted list of virtual machine IDs and their corresponding status. The client selects an appropriate virtual machine to connect to and submits a GET request with the ID of the virtual machine as a standard GET variable. It also requests a protocol upgrade to QVD/1.0 within the HTTP request headers.

The L7R performs the necessary steps to ensure that the virtual machine is up and waiting for connections using the NX protocol. If the virtual machine is not running on any server node, it will determine which node it should be started on and automatically start a virtual machine for that user. In all events, the L7R will determine which node is running the virtual machine and will forward all requests to this machine for all further handling, including checking to see that an NX session can be set up. During this process, the L7R will return a series of HTTP 102 responses indicating the progress of the processing required to establish a connection with the Virtual Machine. If the virtual machine is available, the L7R establishes a connection to the nxagent running on the virtual machine and becomes a pass-thru proxy for the NX session. Once the session is set up, the L7R will issue a final HTTP 101 (Switching Protocols) response to the client, and the protocol for all future interactions with the client will be upgraded to the NX protocol, secured using SSL. The L7R updates the QVD Database to set the status for the virtual machine to indicate that a client is connected.

From this point onward, all communications between the client and the Virtual Machine are performed over the NX protocol via the L7R. When the client disconnects, the L7R updates the QVD Database to represent the change in virtual machine status.
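
The client side of this exchange can be sketched in Python as a set of small helper functions. This is a minimal illustration, not the QVD client implementation: the URL path, the JSON field names (`id`, `state`) and the function names are assumptions, since the text above does not specify them. Only the HTTP BASIC authentication, the `QVD/1.0` upgrade request and the 401/102/101 status codes come from the description.

```python
import base64
import json

# ASSUMPTION: hypothetical path; the real endpoint is not given above.
CONNECT_PATH = "/example/connect"


def basic_auth_header(user, password):
    """Build the HTTP BASIC Authorization header."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def choose_vm(vm_list_json):
    """Pick a VM id from the JSON list, preferring one that is already running.

    ASSUMPTION: each entry is an object with "id" and "state" keys.
    """
    vms = json.loads(vm_list_json)
    if not vms:
        raise LookupError("no virtual machines available for this user")
    running = [vm for vm in vms if vm.get("state") == "running"]
    return (running or vms)[0]["id"]


def build_connect_request(vm_id, user, password):
    """Return (method, path, headers) for the GET that requests the upgrade."""
    headers = basic_auth_header(user, password)
    headers["Connection"] = "Upgrade"
    headers["Upgrade"] = "QVD/1.0"
    return "GET", f"{CONNECT_PATH}?id={vm_id}", headers


def next_step(status):
    """Map the status codes described above to the client's next action."""
    if status == 401:
        return "authentication failed"
    if status == 102:
        return "wait"          # the L7R is still preparing the VM
    if status == 101:
        return "switch to NX"  # protocol upgrade accepted
    return "unexpected"
```

A real client would send these requests over an HTTPS connection and keep reading responses until it sees the final 101, at which point the same socket carries the NX session.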

The process flow is indicated in the following diagram:

1.2.4. L7R in an HA load-balanced environment

As already mentioned, Server Nodes are designed for a fully load-balanced environment. In order to cater for this, the L7R element of each HKD is capable of redirecting traffic for a particular virtual machine to any other Server Node in the environment.

The usual configuration is such that a Virtual Machine is started for each user on any one of the server nodes. When a user is authenticated by any L7R within the solution, the L7R determines which server node is currently running a virtual machine for the authenticated user. This is achieved by querying the QVD Database. If a running virtual machine is detected within the environment, the L7R will reroute all traffic for that connection to the appropriate server node.

If no virtual machine is currently running for the user, the L7R makes use of an internal algorithm to determine the most appropriate node to start a new virtual machine for the user. This algorithm is based on assessing which node has the highest quantity of free resources, calculated as the weighted sum of free RAM, unused CPU, and a random number to bring some entropy to the result.

When an appropriate node has been selected, the database is updated so that a virtual machine will be started by the HKD on the correct host. The L7R will then reroute all traffic for that connection to the server node that has been selected to run the new virtual machine.
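
The node-selection step described above can be sketched in Python as a weighted sum. This is a sketch under stated assumptions rather than the algorithm shipped with QVD: the field names, units, default weights and the way the random term is scaled are all hypothetical. Only the general shape (free RAM, unused CPU and a random component, each weighted) comes from the text.

```python
import random
from dataclasses import dataclass


@dataclass
class NodeStats:
    name: str
    free_ram: float    # ASSUMPTION: any consistent unit, e.g. megabytes
    unused_cpu: float  # ASSUMPTION: fraction of CPU idle, 0.0 to 1.0


def node_score(node, weight_ram=1.0, weight_cpu=1.0, weight_random=1.0, rng=random):
    """Weighted sum of free RAM, unused CPU and a random term."""
    return (weight_ram * node.free_ram
            + weight_cpu * node.unused_cpu
            + weight_random * rng.random())


def pick_node(nodes, **weights):
    """Return the node with the highest score."""
    if not nodes:
        raise ValueError("no server nodes available")
    return max(nodes, key=lambda node: node_score(node, **weights))


nodes = [
    NodeStats("node-a", free_ram=1024, unused_cpu=0.5),
    NodeStats("node-b", free_ram=4096, unused_cpu=0.2),
]
# With the random term disabled the choice is deterministic.
chosen = pick_node(nodes, weight_random=0.0)
```

The random term matters when several nodes have near-identical scores: without it, simultaneous logins would all be sent to the same node.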

1.2.5. Virtualization Technologies

QVD supports two different virtualization technologies: KVM (Kernel Virtual Machine) and LXC (Linux Containers). Each virtualization technology comes with its own set of advantages and will prove more useful for particular use cases. Therefore, a good understanding of your own requirements and an understanding of these two technologies will help you to determine how to configure your QVD deployment.

KVM Virtualization

The Kernel Virtual Machine (KVM) is a fully featured hypervisor that runs inside the kernel of the Linux host operating system. The hypervisor ensures absolute separation from the underlying host operating system, allowing you to load completely different operating systems and distributions into each virtual machine and expect them to function just as if they were running on completely separate hardware.

While there is some debate over whether KVM is actually a Type-1 bare-metal hypervisor, since it does require the Linux kernel in order to function, most virtualization experts agree that combined with the Linux kernel, KVM functions in exactly the same way as any other bare-metal hypervisor, such as Xen or VMware’s ESXi. In fact, in the recently published SPECvirt 2011 benchmark reports, KVM came second in performance only to VMware ESX, indicating a high level of viability as a commercial-grade virtualization platform.

Since KVM uses absolute separation, it is much easier to configure and manage than LXC. However, although it offers competitive performance to other hardware hypervisors, each virtual machine is necessarily running its own kernel. Resources need to be dedicated to each virtual machine, whether they are being used or not. In this way, KVM is not as efficient as LXC, but offers much greater flexibility and ease of management.

LXC Virtualization

Linux Containers (LXC) provide Operating system-level virtualization. In this way, they act as an alternative to the full hardware-level virtualization provided by the KVM hypervisor. LXC behaves in a similar manner to a chrooted environment within Linux, but offers a greater level of isolation and management of resources between containers through the use of namespaces and cgroups. For instance process IDs (PIDs), network resources and mounts for each container will be isolated from other containers and can be logically grouped together to apply resource management rules and other policies specific to the container. This allows you to gain many virtualization benefits while keeping down overall resource requirements and by re-using the same kernel across virtual machines. LXC is fully supported by the Linux Kernel, and has been included in QVD since version 3.1.

1.2.6. Virtual Machines and VMA

Virtual Machines are started by the HKD on a per-user basis. In a production environment, it is usual for there to be a number of different QVD Server Nodes running in parallel. Virtual Machines are started for different users across the different QVD Server Nodes, so that there is one virtual machine instance running for each user that needs to be provisioned. If a virtual machine has not been started for a user, and the user connects and authenticates against an L7R, the L7R will use its load-balancing algorithm to determine which node should run the user’s virtual machine and the database will be updated so that the virtual machine will be started on the appropriate node.

When Virtual Machines are started, they load an "Operating System Flavour" or OSF. The parameters for the Virtual Machine are determined by data stored within the QVD-DB for each OSF. In general, the OSF’s Disk Image is loaded from a network share. There is a separate chapter within this document dedicated to creating, editing and managing OSFs.

Virtual Machines make use of overlays in order to best utilize different elements of the Guest operating system, and in order to make particular elements persistent. For instance, while write activity is not persistent within the actual OSF that is loaded, it is important that data written to the user’s home folder or desktop is stored for future connections to the virtual desktop.

Within instances of QVD that make use of KVM virtualization, this is achieved by storing the user’s home directory within a qcow2 image. This is loaded over the home directory within the OSF that is running in the Virtual Machine.

In instances of QVD that make use of LXC virtualization, this is achieved by taking advantage of unionfs mounts. The user’s home data and any overlay data is stored within a separate directory outside of the image used for a virtual machine. These folders can then be mounted over the base image at runtime, in order to create a container specific to each user and virtual machine.

(Once again, note that it is possible to use alternative methods to create a union style mount, including using the aufs module or by simply using bind mounts to achieve the same purpose.)

The qcow2 image or user home directory is usually stored on a network share, so that it is accessible to any server node within the environment. If the user’s virtual machine is later started on a different Server Node, the user’s home directory can be loaded at run time and the user’s modified data will always be available to the user. Overlays can also be used to make other data such as log and tmp files persistent from a user perspective.

Depending on the virtualization technology configured within QVD, virtual machines will be started either using KVM or LXC. However, it is important to understand that the images for these two different technologies are very different and it is not possible to switch between virtualization technologies.

Once running, each Virtual Machine must load the QVD-VMA (Virtual Machine Agent) in order to function properly. The VMA will ensure that the nxagent is available so that a client is able to connect to the virtual desktop that is created for the user. It also returns different states that help the L7R to determine user state, which can be fed back to the QVD-DB. When an OSF is created, it is fundamentally important that the QVD-VMA is installed and configured in order for QVD to work at all.

1.3. QVD Administration

QVD Administration can be performed using one of two tools:

- qa: a command line utility that can be installed on any machine that has the connectivity to the QVD-DB and that is configured appropriately for this purpose.
- QVD-WAT: a Web-based Administration Tool that allows an Administrator to remotely access the solution and to perform a variety of administrative tasks using a standard web browser.

Both tools require access to the QVD-DB and will need to be configured for this purpose. Nearly all of the commands that can be performed through either of these tools will simply change values for entities within the QVD-DB. Actions are then carried out by the various QVD Server Node elements based on the changes made within the QVD-DB.

The QVD Administration tools are also used to load new images into QVD and to configure their runtime parameters. In order to facilitate this functionality, these tools need access to the folders where these images are stored and accessed by the QVD Server Nodes. Usually, this access is provisioned over a network file share such as NFS.

2. Base QVD Configuration

All QVD Components, except the QVD Client and the QVD VMA, make use of the QVD-DB. As a result any system that makes use of a QVD Component should have a QVD Configuration file that provides the information required to connect to the database.

This information should be configured within a file that is stored at /etc/qvd/node.conf. This path is not created automatically. You can either create it manually, or you can use the provided configuration template by doing the following:

root@myserver:~# cp -R /usr/share/qvd/config /etc/qvd

The node.conf file should at least contain the following:

#

# QVD Node Configuration

#

nodename = mycomputer

# Database connection information.

# database.host: where the QVD database is found

# database.name: the name of the QVD database

# database.user: the user account needed to connect

# database.password: the password needed to connect

database.host = mycomputer

database.name = qvddb

database.user = qvd

database.password = passw0rd

path.log = /var/log/qvd

log.filename = ${path.log}/qvd.log

log.level = INFO

You should ensure that the nodename, database.host, database.name, database.user and database.password contain values that match the environment that you have set up.

Once these settings are in place any utility that requires access to the database will have the appropriate configuration details to do so.

The log related entries must be set here because the QVD components initialize the logging system before connecting to the database.
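
To illustrate the file format shown above, the following Python sketch parses `key = value` lines, skips comments, and expands `${...}` references such as the one used in log.filename. It is an illustrative reader written for this guide under the assumption that the format is as simple as the example suggests. It is not the parser used by QVD, and the function name is hypothetical.

```python
import re

_REFERENCE = re.compile(r"\$\{([^}]+)\}")


def parse_node_conf(text):
    """Parse node.conf-style text into a dict with ${key} references expanded."""
    raw = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        key, sep, value = line.partition("=")
        if not sep:
            continue  # ignore lines without an assignment
        raw[key.strip()] = value.strip()

    def expand(value, depth=0):
        if depth > 10:
            raise ValueError("configuration references are nested too deeply")

        def substitute(match):
            name = match.group(1)
            if name not in raw:
                return match.group(0)  # leave unknown references untouched
            return expand(raw[name], depth + 1)

        return _REFERENCE.sub(substitute, value)

    return {key: expand(value) for key, value in raw.items()}


sample = """
# QVD Node Configuration
nodename = mycomputer
database.host = mycomputer
path.log = /var/log/qvd
log.filename = ${path.log}/qvd.log
log.level = INFO
"""
conf = parse_node_conf(sample)
```

With the sample above, `conf["log.filename"]` expands to `/var/log/qvd/qvd.log`, which is a quick way to check that a path reference resolves to the directory you expect.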

2.1. Other QVD Configuration Parameters

Outside of the configuration file, QVD stores the majority of its configuration options within the QVD-DB. There are a wide range of parameters that apply to different components within the QVD infrastructure. These parameters can be set using the QVD CLI Administration Utility. We discuss the appropriate steps for this in the chapter titled QVD CLI Administration Utility.

While it is possible to set any of the following configuration parameters within the node.conf file, the settings within the QVD-DB will always have precedence. This means that if a change is made to the settings contained within the database, the settings stored within the configuration file would become obsolete and confusing for future administration work. Therefore, we strongly recommend that these options are only updated within the database using the QVD CLI Administration Utility.
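
The precedence rule described above can be pictured as a layered lookup. The Python sketch below is a conceptual model only: the function name is hypothetical, the three-layer ordering (database, then node.conf, then built-in defaults) is an assumption based on this section, and the port values are made up for the example.

```python
from collections import ChainMap


def effective_settings(db_settings, file_settings, defaults):
    """Look up each key in the database first, then node.conf, then defaults."""
    return ChainMap(db_settings, file_settings, defaults)


defaults = {"l7r.port": "8443"}
file_settings = {"l7r.port": "9443"}  # value left behind in node.conf
db_settings = {"l7r.port": "10443"}   # value set later in the QVD-DB

settings = effective_settings(db_settings, file_settings, defaults)
port_in_use = settings["l7r.port"]
```

Here the value in node.conf is silently shadowed by the database entry, which is exactly the situation that makes stale file entries confusing. Note that the logging options are an exception, because they are read before the database connection exists.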

This section describes some of these additional configuration parameters. While there are many other settings which you will be able to view using the QVD CLI Administration Utility, some of these (such as the parameters prepended with the word internal) should never be modified unless under the expert guidance of a QVD Support Engineer. In general, we do not recommend that you change any of these configuration parameters unless you have been guided to do so, either by instruction within this manual, or by QVD Support.

Note that in order to set these parameters, you should have already installed and configured QVD-DB.

Note: Some configuration parameters may relate to the parameters set for another component in the system. In these cases, you may need to update the parameter in more than one place. A typical example would be the l7r.port setting which would affect the client.host.port setting.

Warning: Modifying any QVD internal parameter will void any support agreement that you have set out for QVD. These parameters are subject to change in any release of the software and are designed to help developers debug behaviour inside of the product.

2.1.1. QVD System Paths

The following options are available to change the paths that QVD uses to search for applications, certificates and other QVD specific data.

path.run = /var/run/qvd

path.log = /var/log

path.tmp = /var/tmp

path.storage.root = /var/lib/qvd/storage

path.storage.staging = ${path.storage.root}/staging

path.storage.images = ${path.storage.root}/images

path.storage.overlays = ${path.storage.root}/overlays

path.storage.homes = ${path.storage.root}/homes

path.ssl.certs = ${path.run}/ssl

path.ssl.ca.system = /etc/ssl/certs

path.ssl.ca.personal = .qvd/certs

path.cgroup = /sys/fs/cgroup

path.cgroup.cpu.lxc = /sys/fs/cgroup/cpu/lxc

path.serial.captures = ${path.tmp}/qvd

command.kvm = kvm

command.kvm-img = kvm-img

command.nxagent = /usr/bin/nxagent

command.nxdiag = /usr/bin/nxdiag.pl

command.x-session = /etc/X11/Xsession

command.useradd = /usr/sbin/useradd

command.userdel = /usr/sbin/userdel

The above values are the default values.

-

path.run: the run path (usually referenced by other path options)

-

path.log: the base path to store log output

-

path.tmp: the path to store temporary files

-

path.storage.root: the base path for the main storage area used by QVD

-

path.storage.staging: the staging directory used to hold temporary DIs

-

path.storage.images: the images directory used to hold registered DIs

-

path.storage.overlays: the overlays directory used to hold overlay qcow images

-

path.storage.homes: the homes directory used to hold user home data qcow images

-

path.ssl.certs: the path to store SSL certificates used by QVD

-

path.ssl.ca.system: the path to where system CA certificates are stored

-

path.ssl.ca.personal: the path to where local or personal CA certificates are stored

-

path.serial.captures: the location used to store serial captures (if enabled)

-

command.kvm: the command to run the Kernel Virtual Machine

-

command.kvm-img: the command used to work with QEMU virtual disks within the KVM

-

command.nxagent: the path to the nxagent binary (usually only used by the VMA on an OSF)

-

command.nxdiag: the path to the nxdiag.pl script used by the VMA to ensure that nxagent is running properly

-

command.x-session: the path to the XSession shell script run by the system when an X Windows session is started

-

command.useradd: the path to the useradd script used by the system to add users

-

command.userdel: the path to the userdel script used by the system to remove users
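Several of the defaults above are written in terms of other keys, for example ${path.storage.root}/images. The following is a minimal sketch of how such a reference resolves, assuming plain textual substitution of the referenced key's value. The helper name conf_get is hypothetical and is not part of QVD, and the parser that QVD itself uses may behave differently.

```shell
# Hypothetical helper (not part of QVD): print the effective value of the
# key $2 from the node.conf-style file $1, expanding ${other.key}
# references by plain textual substitution.
conf_get() {
    awk -v want="$2" '
        {
            # Split each "key = value" line at the first "=" sign.
            eq = index($0, "=")
            if (eq == 0) next
            k = substr($0, 1, eq - 1)
            v = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            conf[k] = v
        }
        END {
            v = conf[want]
            # Expand references one at a time; the pass limit stops the
            # loop if two keys refer to each other.
            for (pass = 0; pass < 20 && match(v, /\$\{[^}]+\}/); pass++) {
                name = substr(v, RSTART + 2, RLENGTH - 3)
                v = substr(v, 1, RSTART - 1) conf[name] substr(v, RSTART + RLENGTH)
            }
            print v
        }
    ' "$1"
}
```

With the defaults listed above saved in a file, conf_get thatfile path.storage.images would print /var/lib/qvd/storage/images. An undefined reference expands to an empty string in this sketch.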

2.1.2. Logging

The following options can be used to change the path to the log file and to control log level output.

path.log = /var/log

log.filename = ${path.log}/qvd.log

log.level = INFO

The above values are the default values.

-

path.log: base path for log files

-

log.filename: the path to the log file

-

log.level: the log level output, values can be: ALL, DEBUG, INFO, WARN, ERROR, FATAL, OFF

These configuration options must be set in the QVD configuration file /etc/qvd/node.conf because the logging system is initialized before connecting to the database. Values set in the QVD database using the qvd-admin tool are ignored.

QVD generates its log with Log::Log4perl, which has many logging backends. You may choose to send the log output to syslog, to a file or even to a database. In order to log to syslog, the following configuration variables can be set in node.conf:

log4perl.appender.SYSLOG = Log::Dispatch::Syslog

log4perl.appender.SYSLOG.layout = Log::Log4perl::Layout::PatternLayout

log4perl.appender.SYSLOG.layout.ConversionPattern = %d %P %F %L %c - %m%n

log4perl.rootLogger = DEBUG, SYSLOG

log.level = DEBUG

For a complete breakdown of the different logging options available to you using the log4perl module, please refer to the log4perl documentation.

If you choose to log to a file, ensure that you use some form of log rotation in order to control the growth of your log files.
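One common way to rotate a log file on Linux is logrotate. The sketch below writes a stanza for the default log.filename (/var/log/qvd.log). The helper name write_qvd_logrotate, the weekly policy and the number of copies kept are illustrative assumptions, and copytruncate is used on the assumption that the QVD daemons keep the log file open.

```shell
# Hypothetical helper: write a logrotate stanza for the default QVD log
# file to the path given as $1. The policy (weekly, four compressed
# copies) is only an example. copytruncate is an assumption: it avoids
# having to tell the daemons to reopen their log file.
write_qvd_logrotate() {
    cat > "$1" <<'EOF'
/var/log/qvd.log {
    weekly
    rotate 4
    compress
    missingok
    notifempty
    copytruncate
}
EOF
}
```

As root, write_qvd_logrotate /etc/logrotate.d/qvd would install the stanza, and logrotate -d /etc/logrotate.d/qvd performs a dry run that shows what would be rotated.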

2.1.3. L7R Configuration Options

You are able to specify the following additional options to control the L7R:

l7r.as_user = root

l7r.use_ssl = 1

l7r.port = 8443

l7r.address = *

l7r.pid_file = ${path.run}/l7r.pid

l7r.auth.plugins = default

l7r.loadbalancer.plugin = default

l7r.loadbalancer.plugin.default.weight.ram = 1

l7r.loadbalancer.plugin.default.weight.cpu = 1

l7r.loadbalancer.plugin.default.weight.random = 1

The values set above are the default values.

-

l7r.as_user: the user that should be used to run the QVD L7R daemon

-

l7r.use_ssl: whether or not to make use of SSL to encrypt client connections

-

l7r.port: the port that should be listened on for client connections to the L7R (the client.host.port setting for each client would need to be configured for this value as well)

-

l7r.address: the IP address that the L7R should bind to

-

l7r.pid_file: the path to the PID file that is created when the L7R daemon is running

-

l7r.auth.plugins: can be used to provision additional authentication plugins such as OpenSSO

-

l7r.loadbalancer.plugin: can be used to include an alternative load balancing algorithm plugin

-

l7r.loadbalancer.plugin.default.weight.ram: weight to be assigned to RAM resources for the default load balancing algorithm

-

l7r.loadbalancer.plugin.default.weight.cpu: weight to be assigned to CPU resources for the default load balancing algorithm

-

l7r.loadbalancer.plugin.default.weight.random: weight to be assigned to the randomizer for the default load balancing algorithm

2.1.4. HKD Configuration Options

You are able to specify the following additional options to control the HKD:

hkd.vm.starting.max = 6

The value set above is the default value.

-

hkd.vm.starting.max: the maximum number of virtual machines that are concurrently in the starting state that the HKD will allow before starting a new instance on a Server Node

2.1.5. VM Options

There are some options that can be set to control virtual machine behaviour within QVD:

vm.overlay.persistent = 0

vm.kvm.virtio = 1

vm.vnc.redirect = 0

vm.vnc.opts =

vm.serial.redirect = 1

vm.serial.capture = 0

vm.network.ip.start =

vm.network.netmask =

vm.network.gateway =

vm.network.bridge =

vm.network.dns_server =

The values set above are the default values.

-

vm.overlay.persistent: whether to make use of persistent overlays for temporary and log files. Note that the overlay will not persist between nodes if it is stored locally, for example in a btrfs setup

-

vm.kvm.virtio: whether to make use of the virtio driver for networking (the OSF running in the image must support the virtio driver)

-

vm.vnc.redirect: enable the builtin VNC server when using KVM virtualization. (Useful for troubleshooting an image)

-

vm.vnc.opts: additional configuration for KVM’s builtin VNC server

-

vm.serial.redirect: enable serial port console when using KVM virtualization

-

vm.serial.capture: capture serial console output to file

-

vm.network.ip.start: the start IP address for the range allocated for the virtual machines on the QVD bus network

-

vm.network.netmask: CIDR netmask for the size of the QVD bus network

-

vm.network.gateway: IP of the firewall on the QVD bus network that will be passed by DHCP to the virtual machines

-

vm.network.bridge: Name of the bridge interface

-

vm.network.dns_server: IP of the DNS service to be configured by DHCP on the QVD bus network

Note that the vm.network settings are generally required in order for the QVD-Node servers to function correctly.
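Because the vm.network defaults are empty, they have to be filled in for each installation. The sketch below uses the qa config set key=value form that this guide uses for other settings, on the assumption that the vm.network keys can be stored in the same way. The helper name set_vm_network is hypothetical, and the addresses in the usage note are placeholders.

```shell
# Hypothetical helper: store the vm.network.* settings with qa.
# Arguments: start IP, CIDR prefix length, gateway IP, bridge name, DNS IP.
# The chain stops at the first command that fails.
set_vm_network() {
    qa config set vm.network.ip.start="$1" &&
    qa config set vm.network.netmask="$2" &&
    qa config set vm.network.gateway="$3" &&
    qa config set vm.network.bridge="$4" &&
    qa config set vm.network.dns_server="$5"
}
```

For example, set_vm_network 10.3.15.50 24 10.3.15.1 qvdnet0 10.3.15.254 would describe a /24 bus network on a bridge called qvdnet0. Substitute the values that match your own network.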

3. QVD-DB

The QVD-DB is the glue that ties all of the QVD components together. It makes use of an underlying PostgreSQL DBMS, and should be installed on an Ubuntu 12.04 (Precise Pangolin) GNU/Linux operating system.

All of the configuration and runtime information for the entire QVD VDI is stored in the database, and if it fails the full platform will stop working. For that reason, it is highly recommended that the database is installed in a high availability configuration. You can find out how to configure PostgreSQL in an HA configuration at Linux-HA + DRBD + PostgreSQL and High Availability PostgreSQL HOWTO.

The actual hardware requirements for QVD-DB are very modest and any modern server with just two CPU cores and 2GB of RAM will be able to support the database load.

At the moment QVD works only with PostgreSQL 8 or 9. Version 9.1 is shipped with Ubuntu 12.04 (Precise Pangolin), whilst SLES 11 provides version 8.3.

3.1. Installing and configuring QVD-DB

On the system that you intend to install QVD-DB, you will need to add the QVD repository to your apt sources.

Firstly, add the QVD packages public key to your trusted keys (as root):

# wget -qO - https://www.theqvd.com/packages/key/public.key | sudo apt-key add -

Now, add the repository:

# echo "deb http://theqvd.com/packages/ubuntu-trusty QVD-3.5.0 main" > /etc/apt/sources.list.d/qvd-35.list

# apt-get update

The preferred way to install the central database is with the package perl-qvd-db. It installs the PostgreSQL database system if needed, and provides the tools to provision the database. You should do this as root, as you will need to perform a number of steps that will require full root privileges:

# apt-get install perl-qvd-db

For SLES the process is similar.

Firstly, add the QVD packages public key to your trusted keys (as root):

# rpm --import https://www.theqvd.com/packages/key/public.key

Now, add the repository:

# zypper ar http://theqvd.com/packages/sles/11SP2/stable QVD

# zypper ref

Use zypper to install the database:

# zypper install perl-QVD-DB

3.1.1. Creating the QVD User and Database

You will need to create a user within PostgreSQL to access the QVD Database, and you will need to create the actual database where QVD can set up its tables and store its data. To do this, you will need to use the sudo command to change to the postgres account:

$ sudo su - postgres

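As the postgres user, you can create PostgreSQL user accounts with the createuser command. When run without options it asks some details about the user account, to which you can generally answer n. The -S, -D and -R switches used below give those answers in advance (no superuser, database-creation or role-creation rights), and -P makes the command prompt for a password for the new user.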

For example, to create a user called qvd you would use the following command.

postgres@myserver:~$ createuser -SDRP qvd

Enter password for new role: passw0rd

Enter it again: passw0rd

For more information on this command, please refer to the PostgreSQL documentation at: http://www.postgresql.org/docs/9.1/static/app-createuser.html

The new user can now be assigned as the owner of a database. To create a database for QVD and to assign ownership, use the createdb command. Use the -O switch to set the database’s owner to the account you wish to use. In this case we will set the owner to the new user that we created in the previous step.

postgres@myserver:~$ createdb -O qvd qvddb

For more information on this command, please refer to the PostgreSQL documentation at: http://www.postgresql.org/docs/9.1/static/app-createdb.html

3.1.2. PostgreSQL Configuration Requirements

In order to support concurrent access from all the nodes in the QVD farm and handle transactions coherently, the transaction isolation level must be changed from read committed to serializable. This is a very important step that should not be omitted, or your database will eventually become inconsistent and QVD will fail to work.

Furthermore, it is necessary to allow network access to the database. By default, it is usually set to only listen for queries on localhost. This should be changed to listen on all interfaces.

To do this you must edit the PostgreSQL configuration files postgresql.conf and pg_hba.conf. On Ubuntu they are located in /etc/postgresql/9.1/main. On SUSE systems, you will find these files in /var/lib/pgsql/data.

The transaction isolation level is controlled with the default_transaction_isolation setting. To enable network access to PostgreSQL in general, change the listen_addresses setting from localhost to *.

root@myserver:~# cd /etc/postgresql/9.1/main #this would be /var/lib/pgsql/data on SLES

root@myserver:/etc/postgresql/9.1/main# vi postgresql.conf

listen_addresses = '*'

default_transaction_isolation = 'serializable'

To enable network access for the user qvd, add the following line to pg_hba.conf (its format follows: host database user CIDR-address auth-method [auth-options]).

root@myserver:/etc/postgresql/9.1/main# vi pg_hba.conf

host qvddb qvd 192.168.0.0/24 md5

Make sure to replace the default network 192.168.0.0/24 with the network that your QVD platform uses.

Restart PostgreSQL for the changes to take effect. On Ubuntu:

# service postgresql restart

On SLES:

# /etc/init.d/postgresql restart
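After the restart it is worth confirming that the new isolation level is in effect. The sketch below asks the server for the setting over the network as the qvd user, which also exercises listen_addresses and the pg_hba.conf entry. The helper name check_qvd_isolation is hypothetical, and the database and user names are the ones created earlier in this chapter.

```shell
# Hypothetical helper: succeed only if the PostgreSQL server on host $1
# reports the serializable isolation level that QVD requires.
# psql prompts for the qvd password unless PGPASSWORD or ~/.pgpass is set.
check_qvd_isolation() {
    level=$(psql -U qvd -h "$1" -d qvddb -At \
        -c 'SHOW default_transaction_isolation;') || return 1
    [ "$level" = "serializable" ]
}
```

For example, check_qvd_isolation myserver && echo "isolation level OK" could be run from any Server Node once the PostgreSQL client is installed there.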

3.2. Provisioning QVD-DB

The QVD-DB package includes a script that will help you to provision the QVD Database with all of the tables that are required for QVD to function correctly. In order for this script to work, it requires that the QVD Database settings have been correctly entered in the /etc/qvd/node.conf file.

To provision the database, execute qvd-deploy-db.pl (found in folder /usr/lib/qvd/bin/ which you may want to add to your path if you are going to spend any length of time with QVD).

# qvd-deploy-db.pl

Once you have run this command, QVD-DB will be ready to use by any component within the QVD environment.

3.3. Testing access to QVD-DB

Any system that requires access to the database (e.g. any of the QVD Server Node components, the QVD-WAT or the CLI Administration utility) should be tested to ensure that database connectivity is available. This can be easily achieved by connecting to the database and listing the tables used by QVD. To do this, you will need to ensure that you have the PostgreSQL client installed on the host that you are connecting from. You do this by installing postgresql-client on Ubuntu, and postgresql on SLES:

Use apt-get on Ubuntu:

# sudo apt-get install postgresql-client

Use zypper on SLES:

# zypper install postgresql

To list the tables in the QVD database using the PostgreSQL client, you can do the following:

anyuser@otherserver:~$ psql -U qvd -W -h myserver qvddb

Password for user qvd:

psql (9.1.5)

qvddb=> \d

List of relations

Schema | Name | Type | Owner

--------+-----------------+----------+---------

public | configs | table | hue

public | di_properties | table | hue

public | di_tags | table | hue

public | di_tags_id_seq | sequence | hue

public | dis | table | hue

public | dis_id_seq | sequence | hue

public | host_cmds | table | hue

public | host_properties | table | hue

public | host_runtimes | table | hue

public | host_states | table | hue

public | hosts | table | hue

public | hosts_id_seq | sequence | hue

public | osf_properties | table | hue

public | osfs | table | hue

public | osfs_id_seq | sequence | hue

public | ssl_configs | table | hue

public | user_cmds | table | hue

public | user_extras | table | hue

public | user_extras_id_seq | sequence | hue

public | user_properties | table | hue

public | user_states | table | hue

public | users | table | hue

public | users_id_seq | sequence | hue

public | vm_cmds | table | hue

public | vm_properties | table | hue

public | vm_runtimes | table | hue

public | vm_states | table | hue

public | vms | table | hue

public | vms_id_seq | sequence | hue

(29 rows)

qvddb=> \q
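The same check can be run without an interactive session, which is convenient when testing several hosts. The sketch below counts the tables in the public schema; the helper name qvd_table_count is hypothetical. The listing above contains 22 tables alongside 7 sequences, though the exact number may differ between QVD versions.

```shell
# Hypothetical helper: print the number of tables in the public schema of
# the QVD database on host $1. A provisioned database reports a non-zero
# count; a connection failure makes psql return a non-zero exit status.
qvd_table_count() {
    psql -U qvd -h "$1" -d qvddb -At \
        -c "SELECT count(*) FROM pg_tables WHERE schemaname = 'public';"
}
```

For example, qvd_table_count myserver prints a single number, prompting for the qvd password unless PGPASSWORD or ~/.pgpass supplies it.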

3.4. Backing up and Restoring QVD-DB

A very simple backup technique would involve dumping the entire QVD database to file. The example assumes the database name qvddb used in this chapter:

# pg_dump -U postgres qvddb > yourfile.backup

To roll back your database to match a backup file, you can run the following command:

# psql -U postgres qvddb < yourfile.backup

For advanced operations, check http://www.postgresql.org/docs/9.1/static/backup.html
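For regular backups it helps to give each dump a dated file name so that earlier copies are not overwritten. The following is a minimal sketch, assuming the database is called qvddb as created earlier in this chapter. The helper name backup_qvddb and the file naming scheme are illustrative.

```shell
# Hypothetical helper: dump the QVD database into directory $1 under a
# dated file name and print the path of the file that was written.
# A failed dump removes the partial file and returns a non-zero status.
backup_qvddb() {
    out="$1/qvddb-$(date +%Y%m%d-%H%M%S).backup"
    pg_dump -U postgres qvddb > "$out" || { rm -f "$out"; return 1; }
    printf '%s\n' "$out"
}
```

Run as the postgres user, for example backup_qvddb /var/backups, this leaves one plain SQL file per run, which can be restored with psql in the same way as any other dump.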

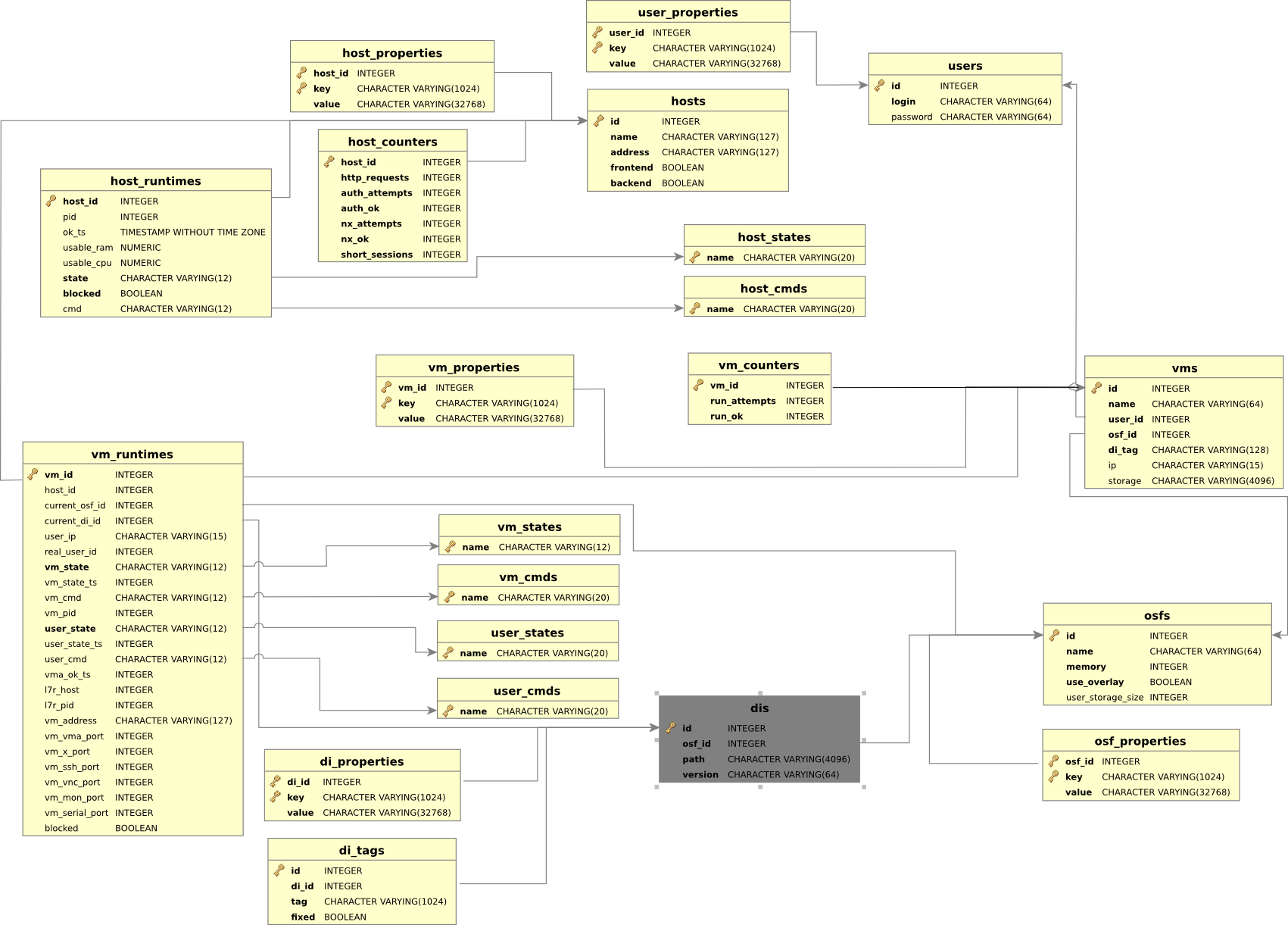

3.5. QVD-DB Data Relationship Model

The following diagram shows the general data model for data stored within the QVD-DB.

It is recommended that Administrators do not attempt to modify entries in the database directly as it is highly likely that this will break the QVD installation. Any changes made to the data stored in the database, outside of those achieved using the QVD tools, will void any support agreement.

4. QVD Web Administration Tool

The QVD Web Administration Tool (QVD-WAT) is a web-based GUI that provides QVD system administrators with all of the tools required to administer a fully installed QVD solution. As a web-based utility, QVD-WAT can be used remotely to provision new users, configure virtual machines and to monitor the health of the various components within the solution.

4.1. Installing and configuring QVD-WAT

On the system that you intend to install QVD-WAT, you will need to add the QVD repository to your apt sources. For Ubuntu, you can do this as follows:

Firstly, add the QVD packages public key to your trusted keys (as root):

# wget -qO - https://www.theqvd.com/packages/key/public.key | sudo apt-key add -

Now, add the repository:

# echo "deb http://theqvd.com/packages/ubuntu-trusty QVD-3.5.0 main" > /etc/apt/sources.list.d/qvd-35.list

# apt-get update

Before proceeding with the installation, note that as of Ubuntu 14.04 one of the dependencies of the WAT (namely, the package libapache2-mod-fastcgi) is no longer distributed in Ubuntu’s main repository. If you are installing the QVD WAT on Ubuntu 14.04 or later, edit the file /etc/apt/sources.list and uncomment the lines referring to the multiverse repository, then issue an apt-get update to make APT aware of the newly available packages. You can then install the package:

# sudo apt-get install perl-qvd-admin-web

For SLES, the process is similar.

Firstly, add the QVD packages public key to your trusted keys (as root):

# rpm --import https://www.theqvd.com/packages/key/public.key

Now, add the repository:

# zypper ar http://theqvd.com/packages/sles/11SP2/stable QVD

# zypper ref

And use zypper to install QVD-WAT.

# zypper install perl-QVD-Admin-Web

The QVD-WAT requires access to the QVD Database. You will need to ensure that the QVD Node configuration file is set up correctly in order for QVD-WAT to function properly. You can find out how to do this in the chapter titled QVD Base Configuration.

As of QVD 3.1, the Web Administration Tool uses the Apache web server (as well as bundling a compatible version of libcatalyst-perl, previously a dependency). The packages provide a basic site file /etc/apache2/sites-enabled/qvd-wat.conf, which you may amend, though that should not be necessary for most vanilla setups.

You will need to restart apache afterwards if you do make changes:

# service apache2 restart

or

# /etc/init.d/apache2 restart

if you are using SLES.

4.2. QVD-WAT Web Server Port

By default, QVD-WAT listens on TCP port 3000 for incoming HTTP requests. If you are running another service on a conflicting port, or you would prefer to use some other port number, you can change the port used by QVD-WAT by editing or creating /etc/default/qvd-wat. The easiest way to do this is:

# echo 'PORT=4000' > /etc/default/qvd-wat

Once you have changed the port, you will need to restart QVD-WAT, to access it on the different port number.
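A quick way to confirm that QVD-WAT answers on the new port is to request its root page and look at the HTTP status code. The sketch below uses curl for this; the helper name wat_http_status is hypothetical, and port 4000 in the usage note is simply the value from the example above.

```shell
# Hypothetical helper: print the HTTP status code returned by QVD-WAT on
# host $1, port $2. curl prints 000 when no connection could be made.
wat_http_status() {
    curl -s -o /dev/null -w '%{http_code}' "http://$1:$2/"
}
```

For example, wat_http_status localhost 4000 should print a three-digit code other than 000 once the service is listening on the new port.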

4.3. The QVD-WAT Interface

To access QVD-WAT you can open a web browser and point it at the host where you are running the QVD-WAT service. If it is running locally, you can point your browser to http://localhost:3000/. Note that you will need to specify the port number that QVD-WAT is running on. By default, this is set to 3000, but you are able to change this.

4.3.1. Authentication

QVD-WAT currently only supports authentication for a single user. The default username is set to admin and the default password is also set to admin.

It is possible to change these values using the QVD CLI Administration Utility. On a system that has the QVD CLI Administration Utility installed, run the following commands:

# qa config set wat.admin.login=administrator

# qa config set wat.admin.password=myS3cR3t

The above commands will change the username to administrator and the password to myS3cR3t.

If you are not authenticated and you connect to the QVD-WAT interface, you will be presented with a login screen.

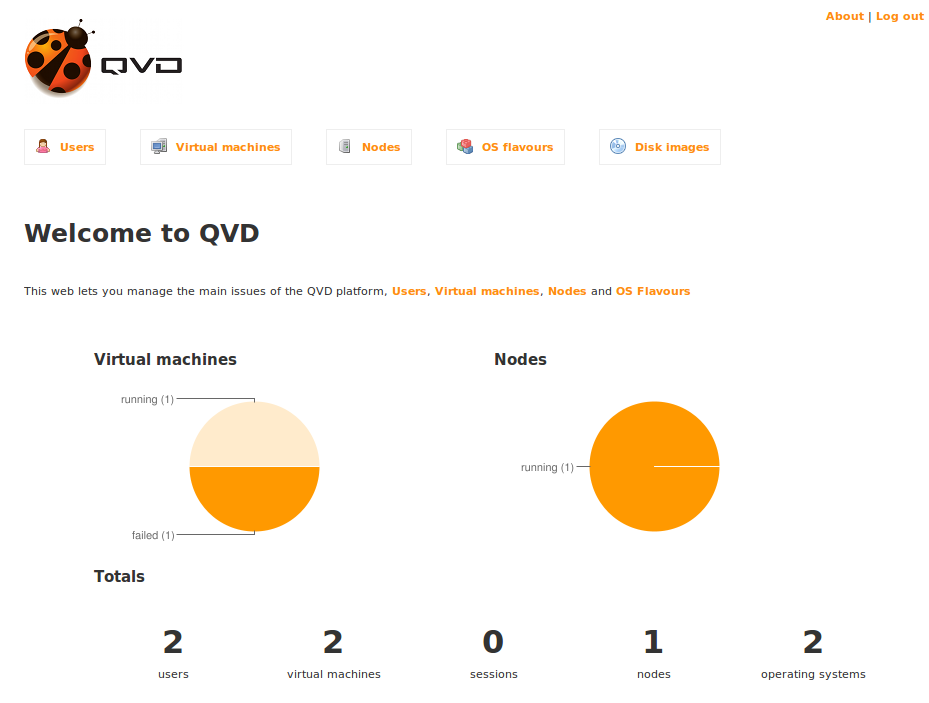

4.3.2. QVD Dashboard

After authentication the administrator is presented with the QVD Dashboard. This screen provides a quick overview of the status of the various components and elements within a QVD solution.

Pie graphs are used to display the proportion of Virtual Machines that are running, stopped or failed; and QVD Server Nodes that are running or stopped.

There is also a summary displaying counters for the numbers of users, virtual machines, actively running sessions, nodes, operating systems and disk images that are active within the QVD infrastructure.

The administrator can return to this screen by clicking on the QVD logo image on the top left of the web page, or alternatively by setting the browser URL back to the root of the site: http://localhost:3000/.

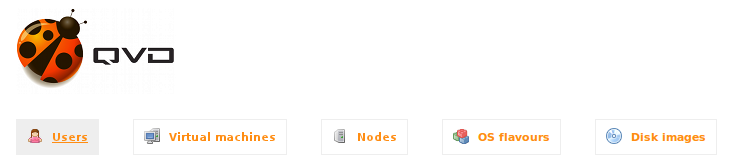

4.3.3. Navigation

Primary navigation within QVD-WAT is largely handled by a Navigation Bar that runs along the top of all web-pages.

The primary navigation links are as follows:

-

Users: http://localhost:3000/users/ - Provides the screen to manage and add users. Since virtual machines are assigned to users, the option to create a new virtual machine is provided through this channel when you choose to view details for a user.

-

Virtual machines: http://localhost:3000/vm/ - Provides a screen to administer existing virtual machines. A Java applet to telnet into any virtual machine’s serial console is also available via this channel, when you choose to view the details for a particular virtual machine. Note that you can only add a virtual machine through the Users link, since they need to be assigned to a user when they are created.

-

Nodes: http://localhost:3000/hosts/ - Provides a screen to add new QVD Server Nodes to the QVD infrastructure, and to view the running state of any existing server node.

-

OS Flavours: http://localhost:3000/osf/ - Provides a screen to add operating system "flavours" to the QVD infrastructure. There is also the option to view and edit the running parameters for any existing image.

-

Disk Images: http://localhost:3000/di/ - Provides a screen to manage disk images for the OSFs. These contain the actual operating system files and directories.

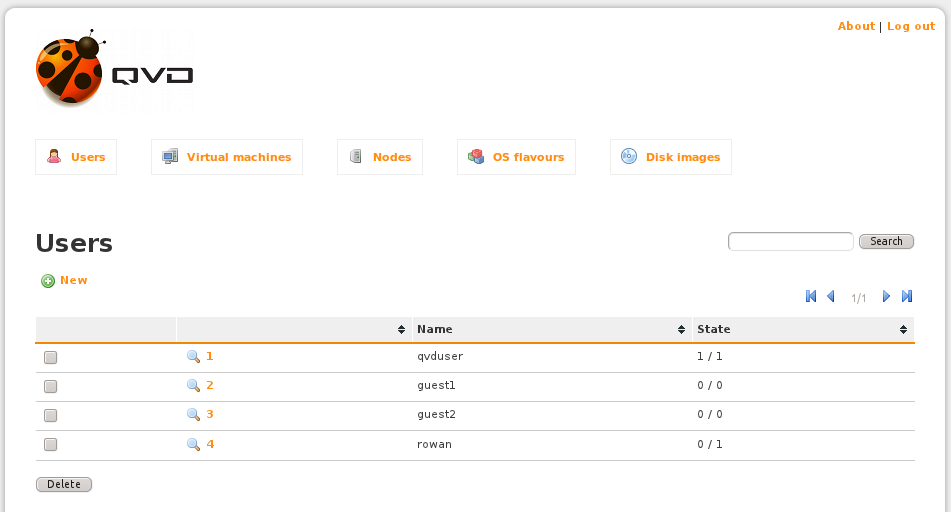

4.3.4. Users

Users are managed within QVD-WAT by clicking on the Users link in the Navigation bar, or by going to the URL http://localhost:3000/users/.

This page displays a list of users that have already been provisioned within the QVD environment. Users are listed by ID along with their login name, and their state within the environment.

Note that in the image below, users are listed as having different states. A user with state set to 0/0 has no active session and has no virtual machine assigned. A user with state set to 0/1 has a virtual machine assigned but is not currently running a session. A user with state set to 1/1 is currently running a session and has a single virtual machine assigned.

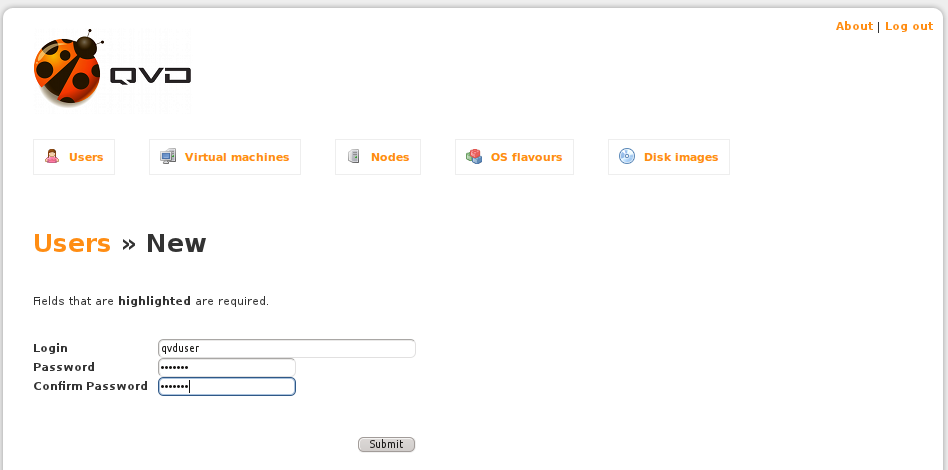

Adding a User

It is simple to add a user to the environment using QVD-WAT. On the Users page, you will notice that there is a New button above the user list.

Clicking on the New button will take you to the New User page within the QVD-WAT. Here you are prompted to provide a Login username and Password and to Confirm Password.

By clicking on the Submit button, the new user will be created within the QVD database.

If you choose to make use of an external authentication mechanism such as LDAP you will still need to add the users to the QVD Database in order to be able to assign virtual machines to them. The usernames should match the entries in LDAP. The password that is stored for the user within the QVD Database will be ignored and the user will actually authenticate against the credentials stored in LDAP.

Deleting Users

Deleting users from QVD using the QVD-WAT is simple. On the Users page, you will notice that there is a checkbox next to each user entry in the Users List. By simply checking the checkbox next to each entry that you wish to remove, you are able to select the users that should be deleted. When you have finished your selection, you can click on the Delete button at the bottom of the User List.

You will be prompted to confirm your intention to delete the users from the system. You will need to affirm your decision before the users are actually removed.

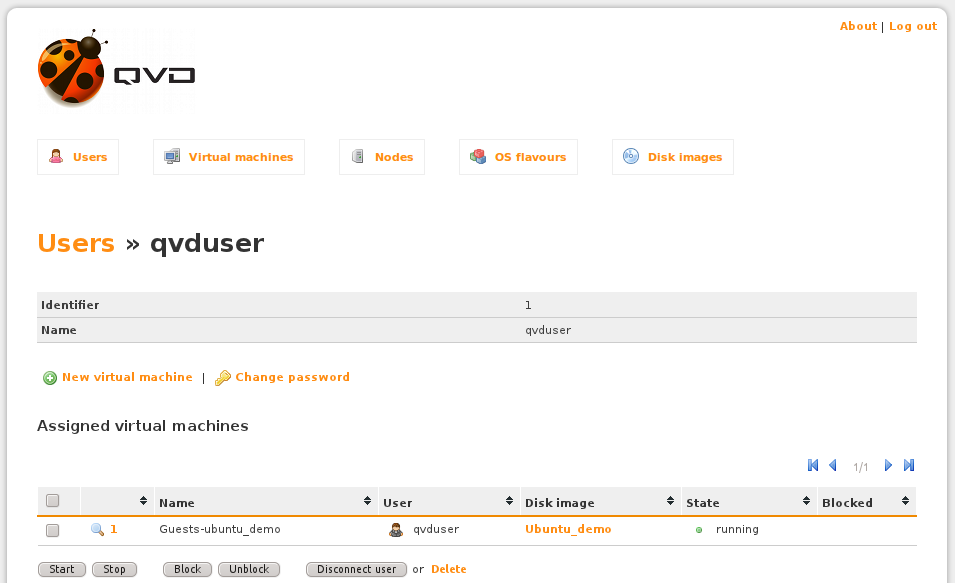

Changing a User Password

To change the password for a user, you will need to find the user in the User List displayed on the Users Page and click on the magnifying glass icon next to the user’s User ID. This will take you to the User Profile page.

Here, you will be able to locate and click on the link for changing the password.

This will take you to the Change Password page, where you will be able to enter a new password for the user.

If you have opted to make use of an external authentication mechanism such as LDAP, password changes performed through the QVD-WAT will not update the user’s password within the LDAP directory.

Assigning a Virtual Machine To A User

In order for a user to be able to login to a virtual desktop environment, the user must have a

virtual machine assigned. This is easily achieved by finding the user in the User List

displayed on the Users Page. Click on the magnifying glass icon

next to the user’s User ID. This will

take you to the User Profile page.

next to the user’s User ID. This will

take you to the User Profile page.

On the User Profile page, locate and click on the

link.

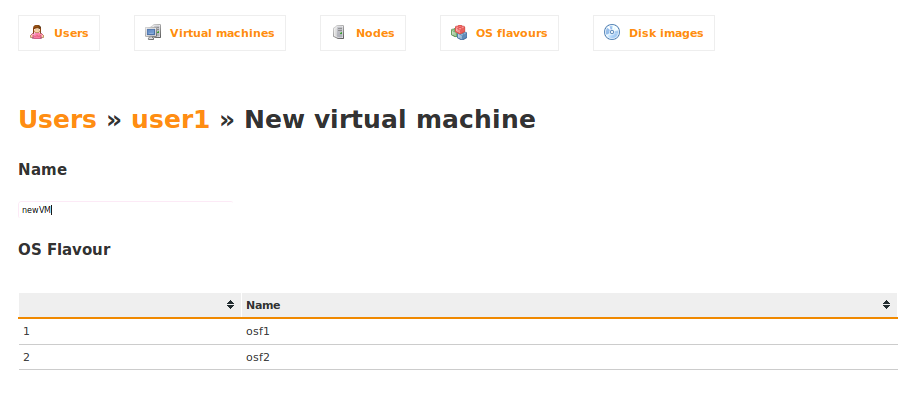

This will take you to the New Virtual Machine Page. Here you can enter a name for the Virtual Machine that

will make it easy to identify. You will then need to select which loaded OSF you would like to run within

the Virtual Machine from the list of OSFs. As soon as you click on an OSF within the list, the virtual

machine will be created and assigned to the current user.

link.

This will take you to the New Virtual Machine Page. Here you can enter a name for the Virtual Machine that

will make it easy to identify. You will then need to select which loaded OSF you would like to run within

the Virtual Machine from the list of OSFs. As soon as you click on an OSF within the list, the virtual

machine will be created and assigned to the current user.

After creating a new virtual machine, you will automatically be taken to the Virtual Machines Page.

You can assign multiple virtual machines to a single user. These may contain different OSFs, allowing the user to perform a variety of different tasks. If a user attempts to connect using the QVD Client, and multiple virtual machines are available to the user, the user will be presented with a menu of the available virtual machines to select from before the connection is established.

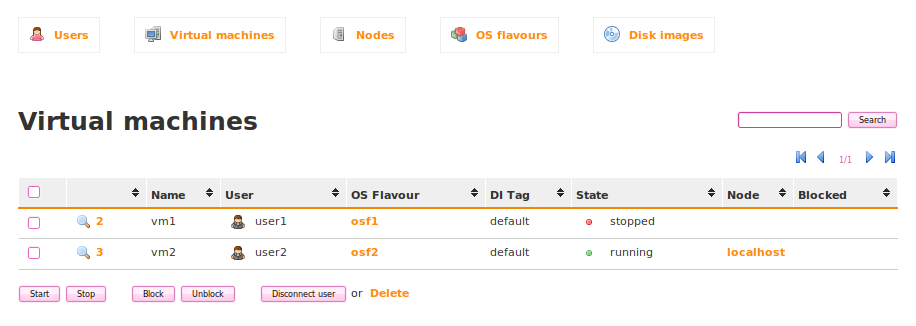

4.3.5. Virtual Machines

The Virtual Machines Page is usually accessed by clicking on the Virtual Machines link in the Navigation bar, or by going to the URL http://localhost:3000/vm/, within the QVD-WAT.

This page displays a list of Virtual Machines that have already been created and assigned to users within the QVD environment. Virtual Machines are listed by ID along with their name, the user that they have been assigned to, the OSF that they will load, their state within the environment, and the node where the virtual machine is running.

Starting and Stopping Virtual Machines

While the L7R component of any Server Node that receives an authentication request will automatically start an instance of a virtual machine for the user that has been authenticated, if one is not already running, this takes time and delays the client from presenting the desktop to the user. It is usually a good idea to start up virtual machine instances beforehand, so that users do not have to wait for an image to boot.

Starting a Virtual Machine within the QVD-WAT is trivial. Check the checkboxes next to each of the Virtual Machines that you wish to start and then click on the Start button at the bottom of the list.

If you want to start all of the listed Virtual Machines, you can click on the checkbox in the header of the table.

While a machine is starting up, you will see that the state first changes to starting_2 and then eventually changes to running. If something goes wrong during the startup, the state will change to failed and usually the Blocked flag will be set.

To stop any Virtual Machine within the environment, follow the same procedure. Check the checkboxes for the Virtual Machines that you want to stop, and then click on the Stop button at the bottom of the list.

Virtual Machine Blocking and Unblocking

Virtual Machines can enter a Blocked state. This means that even if they are started, a user will not be able to login to the desktop using the client. Usually machines automatically enter a Blocked state if they fail to start correctly or if there is some problem either with their network configuration or with the QVD-VMA that should be running on each virtual machine. However, it is also possible to force the Blocked state using the QVD-WAT. This is usually done if an administrative task needs to be performed on the Virtual Machine, and the administrator does not want anybody to be accessing the virtual machine at the same time.

In order to Block a Virtual Machine, check the checkbox next to the Virtual Machine that you want to disable, and then click on the Block button at the bottom of the Virtual Machine list. You will be prompted to confirm that you intend this action.

Once the Virtual Machine has been blocked, you will be able to access it via the Terminal Console in order to perform maintenance.

It is equally trivial to Unblock a Virtual Machine. Check the checkbox next to the Virtual Machine that you want to enable, and then click on the Unblock button at the bottom of the Virtual Machine list. You will be prompted to confirm that you intend this action.

Unblocking a Virtual Machine that has failed to start properly will not fix the problem. It only really makes sense to Unblock a Virtual Machine if you have purposefully Blocked it or if you have just finished resolving a startup problem. If a machine is Blocked as a result of a startup failure, you will more than likely need to edit the underlying OSF.

Deleting a Virtual Machine

Since it is possible to assign more than one Virtual Machine to a user, there may be times that you wish to delete a particular virtual machine. This is a trivial action. On the Virtual Machines page, check the checkbox next to the Virtual Machine that you want to delete, and then click on the Delete link at the bottom of the Virtual Machine list. You will be prompted to confirm that you intend this action.

Disconnecting a User

During periods of maintenance, you may find that you need to disconnect users from their Virtual Machines. This can be achieved easily. On the Virtual Machines page, check the checkbox next to the Virtual Machine that you want to disconnect a user from, and then click on the Disconnect User button at the bottom of the Virtual Machine list. You will be prompted to confirm that you intend this action.

This action is performed without any warning to the user. The client will simply disconnect the moment that the command is issued. No data will be lost unless the Virtual Machine is restarted, but the user will be unaware of the reason for the dropped connection. As a result, this action should be used with care.

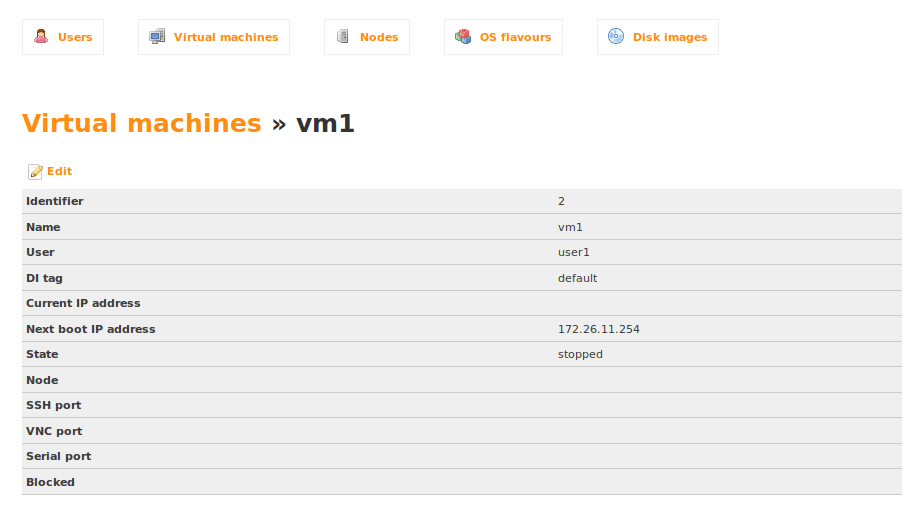

Editing Runtime Parameters

It is possible to edit the runtime parameters for any virtual machine. To do this, you will need to go to the Virtual Machines page and find the virtual machine within the Virtual Machine List. Each Virtual Machine entry includes the Virtual Machine identifier and a magnifying glass icon which acts as a link through to a page where you are able to view the current runtime parameters for that Virtual Machine.

In order to edit any of these parameters, you can click on the button that renders this page as a form, which allows you to change some of the runtime options within the virtual machine.

Here you are able to change the following parameters:

-

Name: the virtual machine name that will be listed in the QVD-WAT and that will be presented to the user in a menu if the user has more than one Virtual Machine assigned

-

DI Tag: the Disk Image tag this VM will use. Most of the time this will be either default or head

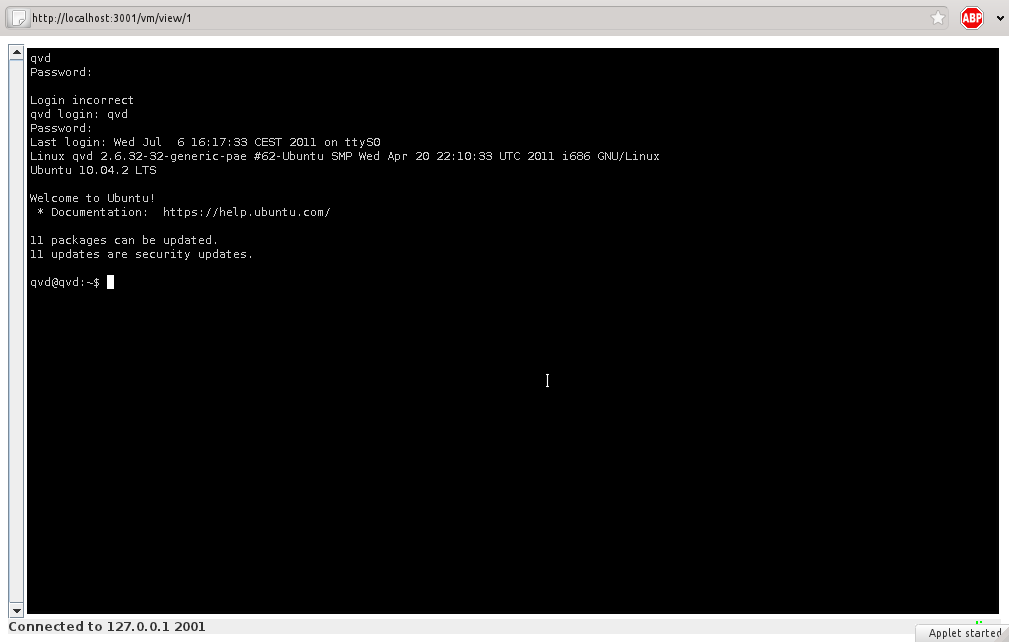

Terminal Console

At the bottom of the VM Runtime Parameters Page there is a Telnet Viewer button. Clicking on the Telnet Viewer button will open a separate window containing a Java applet that will automatically telnet into the Serial Port on the Virtual Machine, allowing an Administrator to connect and to log in in order to perform administrative duties for a particular Virtual Machine.

In general, major administration is performed directly on a DI, so that changes are implemented across all virtual machines sharing the same image. However, there are particular instances where an Administrator may need to access a running virtual machine to help a user or to troubleshoot a problem. Most frequently, this utility will be used by an Administrator when a virtual machine fails to start correctly and enters a Blocked state.

The Java Telnet applet is only provided as a convenience to remote administrators. It is equally possible to use any other standard telnet application to access the serial port used for a Virtual Machine on any QVD Server Node by specifying the IP address of the Server Node and the port number for the Serial Port.
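As a minimal sketch of connecting with a standard telnet client, the helper below only builds the command line from a Server Node address and a serial port number. The function name `serial_console_cmd`, the address `192.0.2.10` and the port `2001` are placeholders chosen for illustration; they are not QVD defaults, and the real values must be taken from your own environment.

```shell
# Placeholder values (assumptions): replace with the IP address of the
# Server Node and the serial port assigned to the Virtual Machine.
NODE_IP="192.0.2.10"
SERIAL_PORT="2001"

# Print the telnet command line for a VM serial console.
serial_console_cmd() {
    printf 'telnet %s %s\n' "$1" "$2"
}

serial_console_cmd "$NODE_IP" "$SERIAL_PORT"
```

Running the printed command from any machine that can reach the Server Node should open the same serial console that the applet uses.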

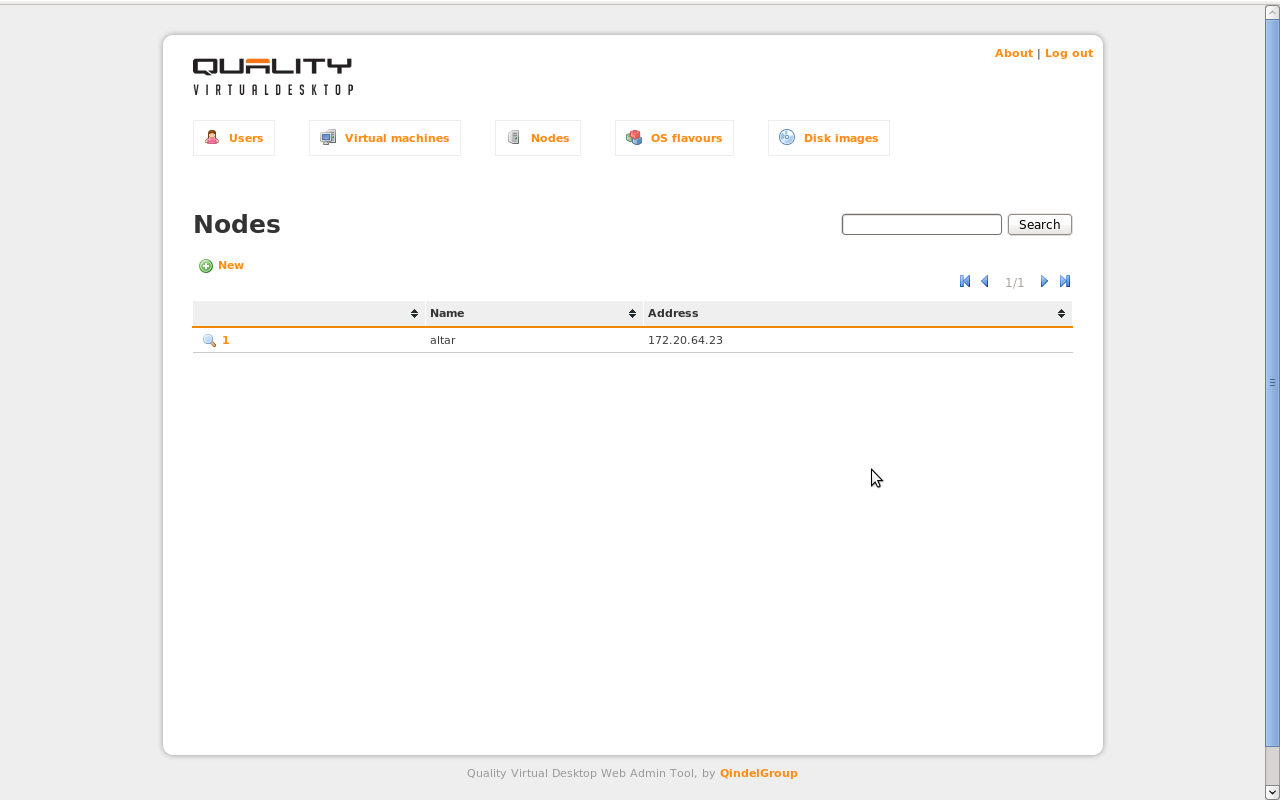

4.3.6. Nodes

The Nodes Page is usually accessed by clicking on the Nodes link in the Navigation bar, or by going to the URL http://localhost:3000/hosts/, within the QVD-WAT.

This page displays a list of QVD Server Nodes that have already been provisioned within the QVD environment. Nodes are listed by ID along with their name, IP Address, and their state within the environment.

While it is possible to click on the magnifying glass icon next to the identifier for any node to view the details for that node, you should be able to view all of this data directly in the Nodes List on the Nodes Page.

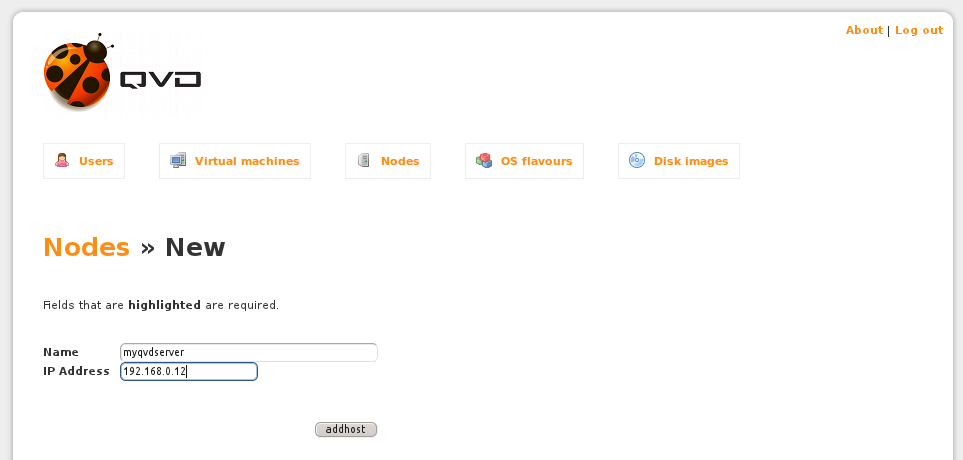

Adding Nodes

In order for a QVD Server Node to function properly within the QVD environment, it needs to be registered within the QVD-DB. To do this, you can add the Server Node details within the QVD-WAT. Go to the Nodes Page and click on the button that opens the New Node Page.

On this page, you should enter a name to identify the node that you are adding (usually the hostname would be a good option) and provide the IP address for the node. Click on the addhost button to register the node within QVD-DB.

Blocking and Unblocking Nodes

Just as with Virtual Machines, it is possible to Block access to a Server Node. A blocked Server Node no longer takes part in any activity within the QVD infrastructure. This is effectively the same as shutting down the Server Node, in the sense that the Server Node will be unavailable to the rest of the environment. If the Server Node is currently hosting virtual machines and a client attempts to connect to one of them, the client will not be able to access that Virtual Machine and will receive an error notifying it that the server is currently under maintenance. Clients that are already connected to virtual machines running on a Node that has been blocked will remain connected until they are either forced to disconnect by an Administrator or they disconnect of their own accord.

To change the state of a Server Node to Blocked you can check the checkbox next to the Server Node in the Node List on the Nodes Page. Then click on the Block button at the bottom of the list.

Unblocking a Server Node is as simple as checking the checkbox next to the Server Node in the Node List on the Nodes Page and then clicking on the Unblock button at the bottom of the list.

4.3.7. OS Flavours

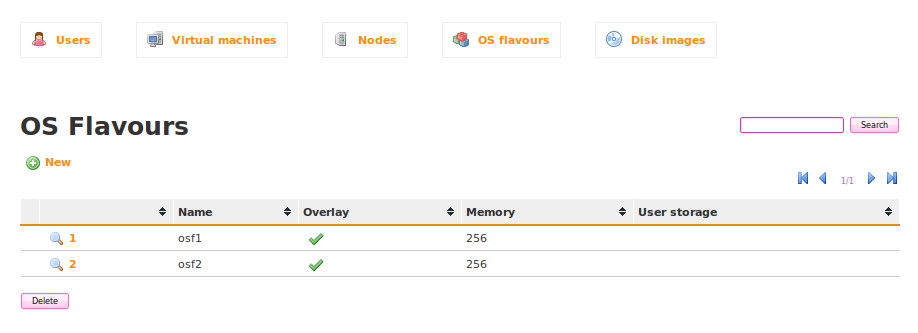

The OS Flavours Page is usually accessed by clicking on the OS Flavours link in the Navigation bar or by going to the URL http://localhost:3000/osf/, within the QVD-WAT. QVD uses an Operating System Flavour (OSF) to load into each virtual machine that it creates for every user. The OSF provides the user’s desktop environment and all of the user’s applications. This manual goes into depth about creating, editing and managing OSFs and Virtual Machines. Please refer to the part labelled Operating System Flavours and Virtual Machines for more information on this.

The OS Flavours Page lists any OSFs that are already registered in the QVD-DB. OSFs are listed by ID along with their name, whether they have overlays enabled and the memory allocated for them to run. Each OSF needs at least one DI (Disk Image) linked to it. The DI is what actually contains the files and directories that comprise the Operating System that is run inside the Virtual Machine.

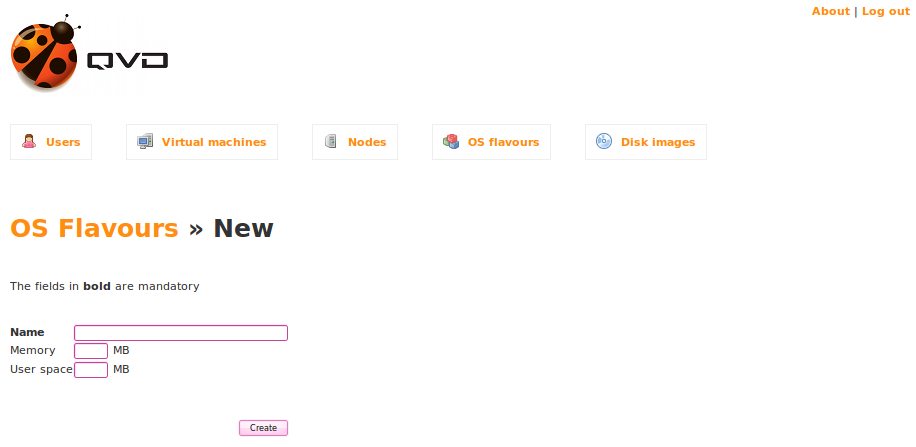

Adding an OSF

In order to use an OSF, it needs to be registered in the QVD-DB along with its runtime parameters. To do this, you can add the OSF within the QVD-WAT. Go to the OS Flavours Page and click on the button that opens the New OSF Page.

This page presents a number of runtime parameters for the OSF that you are adding.

- Name: This field is mandatory. You should use it to provide a name for the OSF that will allow you to identify it when adding it to a Virtual Machine or when linking a Disk Image to it.

- Memory: This field is optional. It is used to allocate system memory to the Operating System. It has a default value of 256 MB. While the default value should be sufficient for a basic desktop, on most production systems, you would probably increase this to at least 512 MB for the Gnome or KDE desktop environment to run comfortably.

- User space: This field is optional. It is used to allocate disk space to a user for the purpose of storing a home directory. By default, this option is usually not set and the user’s home will not be persistent. That means that if the virtual machine is restarted, any user data will be lost. Setting a value here will create a virtual disk of the size specified. This ensures that user data is persistent, and helps to enforce quotas and to prevent user home directories from unlimited growth which could impact on other users of the QVD environment.

Finally, click on the Create button to load the OSF.

Deleting an OSF

Deleting OSFs from QVD using the QVD-WAT is simple. On the OS Flavours page, there is a checkbox next to each OSF entry in the list. Check the checkbox next to each OSF that you wish to remove, then click on the Delete button at the bottom of the list.

You will be prompted to confirm your intention to delete the OSF from the system. You will need to affirm your decision before the OSF is actually removed.

4.3.8. Disk Images

OSFs only contain information about the Operating System that will run inside the Virtual Machine, but they don’t hold the actual Operating System. For this, a Disk Image is needed. Disk Images relate Operating System image files to OSFs, so several image files can be used with a given OSF. This mechanism allows the administrator to roll back to a previous, known-good image file if a newer one is found to have some kind of problem.

The way this works is by tagging. DIs can be tagged with several strings, and each VM has a DI Tag field that refers to one of these tags. This way, when a VM starts, the Disk Image which has the specified tag is chosen.

When adding Disk Images, they are automatically tagged with a string like 2011-03-04-000. This is a unique string that identifies that DI. It contains the current date and a sequential number.

Other meaningful tags a DI can have are head and default. head is always assigned to the most recent DI. This is useful for VMs that must always run the latest image—just set their DI Tag field to head and they will always use new DIs as they are added to the system.

If this behaviour isn’t desired, you can use the tag default. This tag isn’t reassigned when DIs are added, so you can expect VMs to always use a specific DI. Whenever the administrator tags a different DI as default, though, all VMs using the tag default will start using it from their next boot.
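The tag lookup described above can be illustrated with a small, self-contained sketch. It is not QVD code: the table format, the DI and OSF identifiers and the function name `resolve_di` are all assumptions made for this example, and the real data lives in the QVD-DB. The sketch only shows how a VM's DI Tag value selects one DI within an OSF.

```shell
# Hypothetical DI table, one DI per line:
#   <di-id> <osf-id> <comma-separated tags>
# This text format exists only for illustration.
DI_TABLE='1 1 2011-03-04-000,default
2 1 2011-03-05-000
3 1 2011-03-07-000,head
4 2 2011-03-06-000,head,default'

# Print the id of the DI in the given OSF that carries the given tag.
# Prints nothing if no DI in that OSF has the tag.
resolve_di() {
    osf="$1"; tag="$2"
    printf '%s\n' "$DI_TABLE" | awk -v osf="$osf" -v tag="$tag" '
        $2 == osf {
            n = split($3, tags, ",")
            for (i = 1; i <= n; i++)
                if (tags[i] == tag) { print $1; exit }
        }'
}

resolve_di 1 head            # VM that follows the most recent DI
resolve_di 1 default         # VM that follows the administrator's choice
resolve_di 1 2011-03-05-000  # VM pinned to one specific DI
```

With the sample table, the three calls print 3, 1 and 2. Moving the default tag from DI 1 to another DI of OSF 1 would change only the second result, which mirrors what happens to VMs using default at their next boot.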

In the Disk Images listing in the WAT, you can see and change which image is the default for each OSF. The column Default in the listing serves both of these purposes.

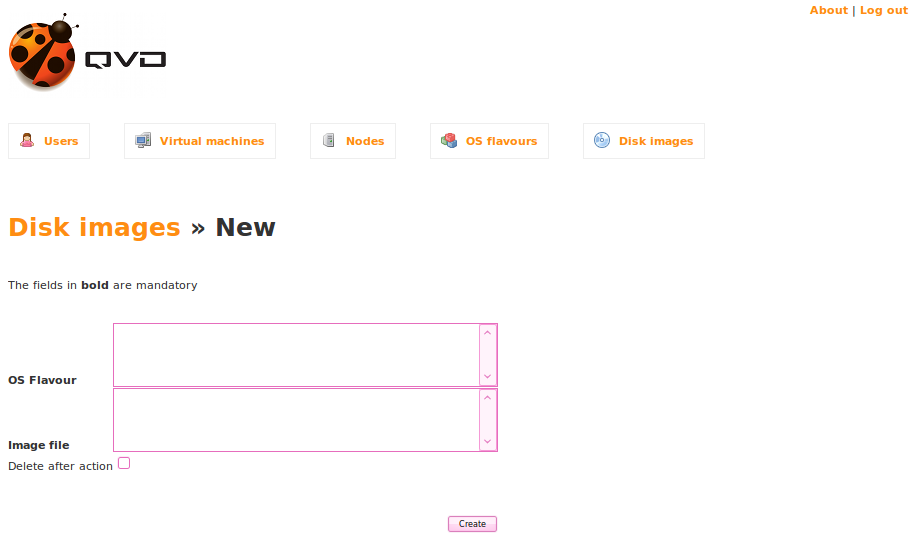

Adding an Image

Disk Images must be registered in the QVD-DB before you can make use of them. The QVD-WAT can be used to register images in the database. Go to the Disk Images page and click on the button for adding a new image. The form that opens has the following fields:

- OS Flavour: This field is mandatory. The list will show the existing OS Flavours in the system, and you can choose the OSF this Image will be associated with.

- Image file: Selecting an image from the list of images is compulsory. The list will only be populated with the image files that are available within /var/lib/qvd/storage/staging. If no files are available within this directory, the field will appear empty and you will not be able to proceed from this point.

- Delete after action: A checkbox that allows you to either delete the original image from the staging directory once it has been added, or to keep it available as a staging image. This option is available because the original image file is copied to /var/lib/qvd/storage/images once you have loaded it into QVD. You may want to delete the image file from the staging directory to save disk space, but you may equally want to reuse it with alternative memory and user space settings for another group of users. It is optional to delete the temporary image file.

Finally, click on the Create button to load the Disk Image. It may take some time to copy the image file and to update the database. Please be patient while this action completes.
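Since the Image file list is only populated from the staging directory, it can help to check that directory on the server before opening the form. The following is a minimal sketch using standard shell tools only; the function name `list_staged_images` is an assumption made for this example and is not part of QVD.

```shell
# Staging directory named in this manual.
STAGING_DIR="/var/lib/qvd/storage/staging"

# Print the name of every regular file in a directory, one per line.
# Defaults to the staging directory if no argument is given.
list_staged_images() {
    dir="${1:-$STAGING_DIR}"
    [ -d "$dir" ] || { echo "no such directory: $dir" >&2; return 1; }
    for f in "$dir"/*; do
        [ -f "$f" ] && basename "$f"
    done
    return 0
}

list_staged_images || echo "Staging directory not found on this host." >&2
```

If the function prints nothing, the Image file field in the QVD-WAT would be expected to appear empty as well, and an image file needs to be copied into the staging directory first.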

Deleting an Image

When a Disk Image is no longer deemed necessary, it can be removed from the system. This can be easily done from the Disk Images page. Just as with OSFs, you can click the checkbox next to each entry that you wish to remove, then click on the Delete button at the bottom of the list.

You will be prompted to confirm your intention to delete the Disk Image from the system. You will need to affirm your decision before the image is actually removed.

If a copy of your image file is not available in the staging directory or in a backup, you will lose the image file completely. Since these are usually very large and take some time to create, you may want to create a backup before you proceed with this action.
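One way to take such a backup is a plain file copy followed by a byte-for-byte comparison. The sketch below assumes a backup location of /srv/backup/qvd-images, which is a hypothetical path, and the function name `backup_image` is likewise an invention for this example. The image file name is left as a placeholder because it depends on your installation.

```shell
# Image directory named in this manual.
IMAGES_DIR="/var/lib/qvd/storage/images"
# Hypothetical backup location (assumption): choose your own.
BACKUP_DIR="/srv/backup/qvd-images"

# Copy one image file into a backup directory and verify the copy.
# Returns non-zero if the source is missing or the copy differs.
backup_image() {
    src="$1"; dest_dir="$2"
    [ -f "$src" ] || { echo "not a file: $src" >&2; return 1; }
    mkdir -p "$dest_dir" || return 1
    cp -p "$src" "$dest_dir/" || return 1
    cmp -s "$src" "$dest_dir/$(basename "$src")"
}

# Example call (the file name is a placeholder):
# backup_image "$IMAGES_DIR/<image-file>" "$BACKUP_DIR" && echo "backup verified"
```

Because image files are large, the copy and the comparison may each take a while, so it is worth running them before opening the delete dialog rather than during it.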

Setting default Images

As explained in Disk Images, more than one DI can be assigned to an OSF, and each VM has a parameter (DI Tag) that selects which of these DIs that VM uses. VMs that choose the tag head will always use the latest DI in the relevant OSF. VMs that choose a given numeric tag will use the DI that carries that tag. VMs that choose the default tag will use whatever DI is set as default in each OSF.

In the listing of DIs there are radio buttons that allow the user to select which of the DIs within each OSF has the default tag. It is best to sort the list by OSF to see this clearly. To set a given DI as default, just check its radio button (which will uncheck the currently checked DI in the same OSF) and then click on the Set defaults button. From then on, the next time a VM using that OSF and having default in its DI Tag field is started, it will use the newly selected DI.

You can change more than one DI at the same time by checking the desired radio buttons, then clicking on Set defaults once instead of changing one default DI each time.

5. QVD Server Nodes

QVD Server Nodes are the workhorses within the QVD infrastructure. The nodes are run by a single binary component, the HKD or House Keeping Daemon, which tracks the status of virtual machines. The HKD is responsible for starting and stopping virtual machines. The HKD monitors the health of each virtual machine and then updates status information within the QVD Database, so that other Nodes and the administration tools are able to function accordingly. In general, the HKD is responsible for managing virtual machine status.

The HKD also invokes the L7R, a Layer-7 Router that acts as the broker within the server environment, responsible for authenticating users, establishing sessions and routing connections to the appropriate virtual IP addresses. In general, the L7R is responsible for managing user status.

Usually Server Nodes are installed across a number of systems running as a cluster. This means that within a typical deployment you are likely to have several Server Nodes, each of which will need to be installed and configured.

You should familiarize yourself with the general architecture of a server node by referring to the chapter in this manual labelled Components and Architecture and in particular the subsection labelled QVD Server Architecture.

5.1. Installation of a QVD Server Node

On each system on which you intend to install the QVD Server Node components, you will need to add the QVD repository to your apt sources.

Firstly, add the QVD packages public key to your trusted keys (as root):

# wget -qO - https://www.theqvd.com/packages/key/public.key | sudo apt-key add -

Now, add the repository:

# echo "deb http://theqvd.com/packages/ubuntu-trusty QVD-3.5.0 main" > /etc/apt/sources.list.d/qvd-35.list

# apt-get update

To install all of the QVD Server Node components and their dependencies, run the following command:

# apt-get install perl-qvd-node